04

Evaluation:

Comparative User Testing

~ 09.02.2025

A Quick Recap

Before I begin this entry, I would like to make it clear that the preparation and user testing were conducted in week 3, but they are documented here along with the evaluation of the results in week 4 for the sake of clarity. This entry is mostly about preparing the materials and setting up the environment for the testing, followed by documentation of the actual testing and feedback.

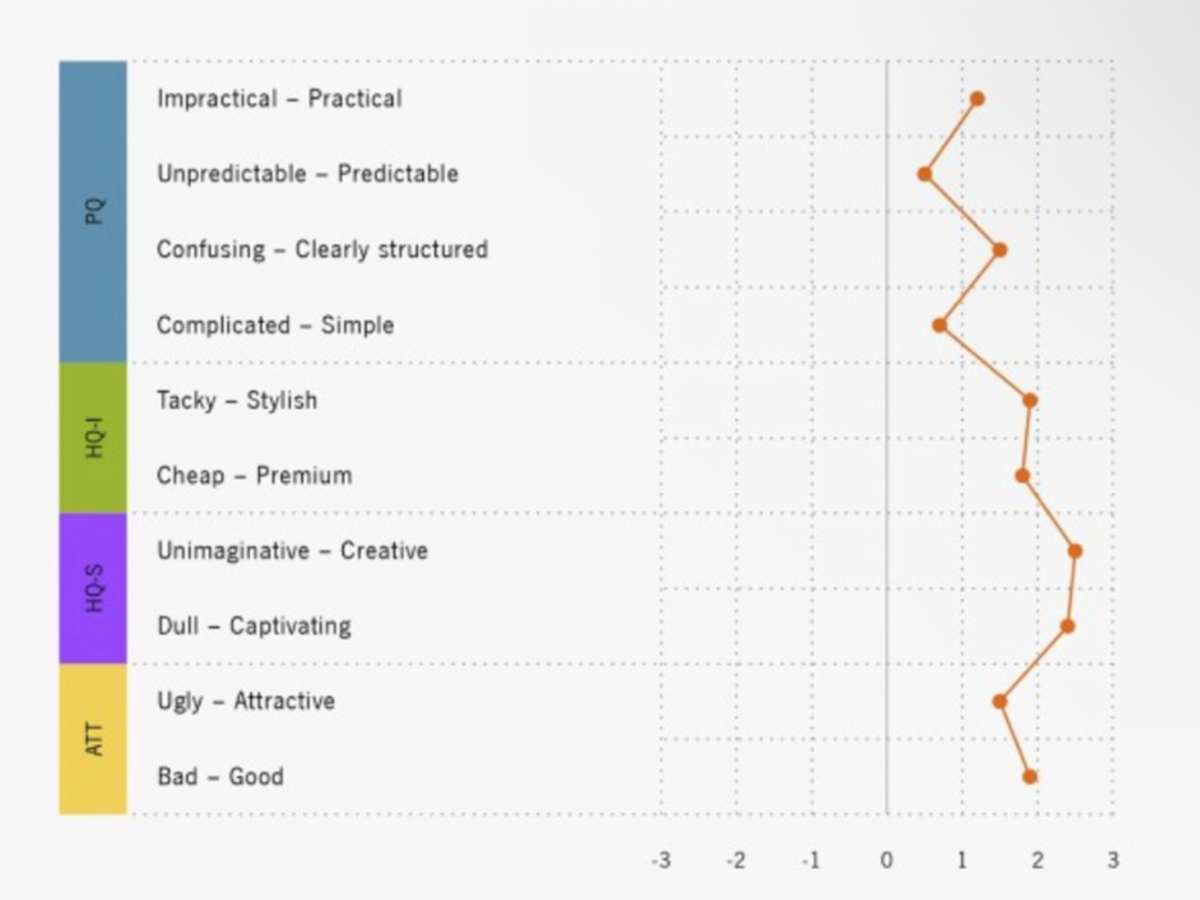

Interface Evaluation: I'll be using a quantitative approach based on existing metrics, specifically a modified version of AttrakDiff. This will assess the prototypes' pragmatic and hedonic qualities, but with a twist: when evaluating pragmatic qualities, more emphasis will be placed on creative value than on the usual usability aspects.

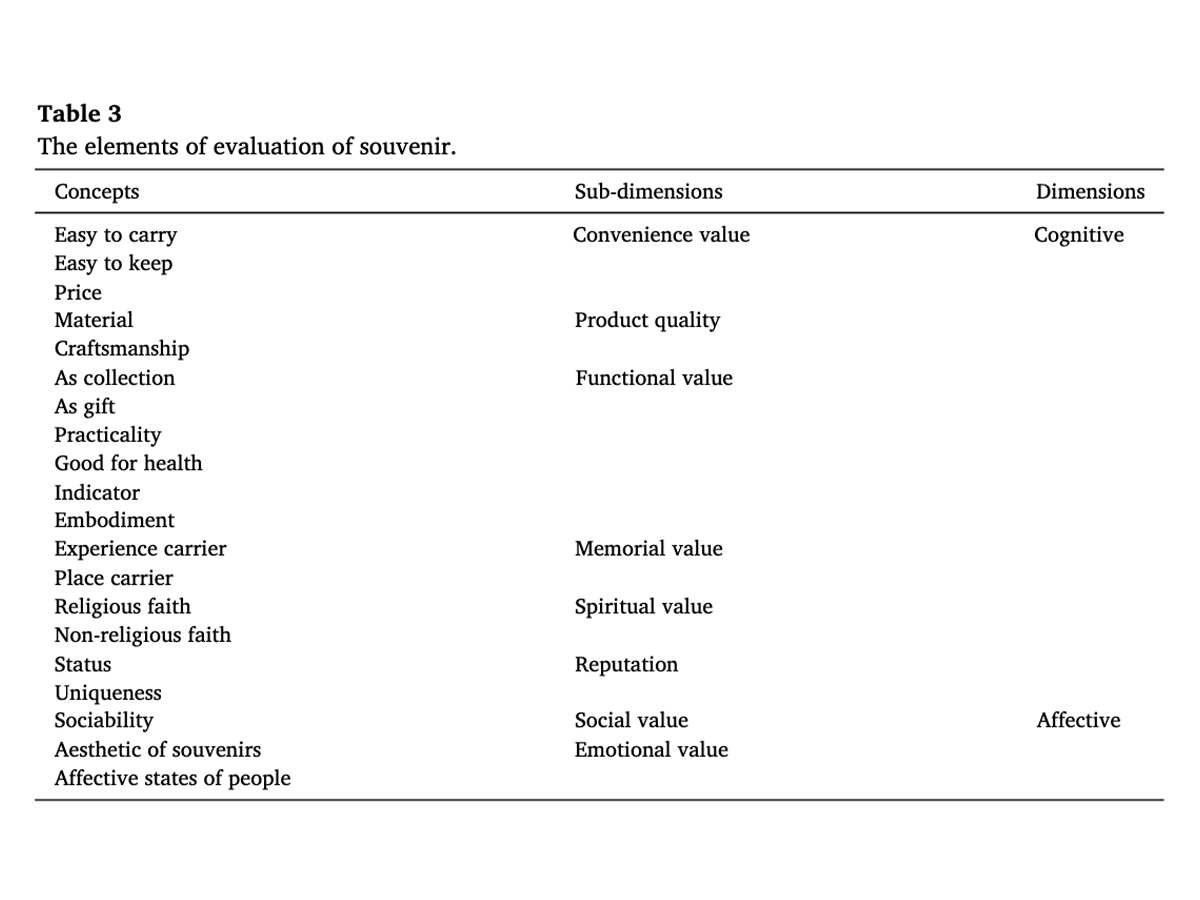

Souvenir Evaluation: The framework for evaluating the souvenir will be adapted from Zi Yan Duan et al.'s work, focusing on the meaning derived from bio-data instead of the typical associations with a physical place. To refine this, I'll also draw on Petrelli et al.'s guidelines for tangible data souvenirs, with an emphasis on how the souvenir invites personal reflection through its connection to the user's data.

Qualitative Insights: I'll gather qualitative feedback using semi-structured interviews, open-ended questions, and narrative elicitation techniques. These will help uncover participants' thoughts on the symbolic and reflective value of the artefacts, their overall experience during the interaction, and their level of satisfaction. Participants will also share impressions of the interface's visual appeal and how it influenced the perceived value of the souvenir.

Preparation (Week 3)

For the preparation, I would need:

| Category | Tasks |
|---|---|
| Users | - Recruit target audience participants. - Share study purpose, data usage, and confidentiality details for consent (no form needed). |
| Space | - Ensure a quiet, private, distraction-free environment. - Set up comfortable lighting and seating. |
| Prototypes | - Prepare and test all three prototypes to ensure they work as intended. |
| Feedback Materials | - Create pre- and post-surveys (baseline info, impressions, satisfaction). - Adapt AttrakDiff and souvenir evaluation metrics. - Prepare a semi-structured interview guide. |
| Equipment | - Provide necessary devices (laptops, tablets, etc.). - Use a recording device (audio/video) with consent. - Bring a notebook or laptop for real-time note-taking. |
| Data Collection Setup | - Ensure proper logging of OSC/EEG/biofeedback data. - Save all outputs (e.g., printed artefacts) for later evaluation. |

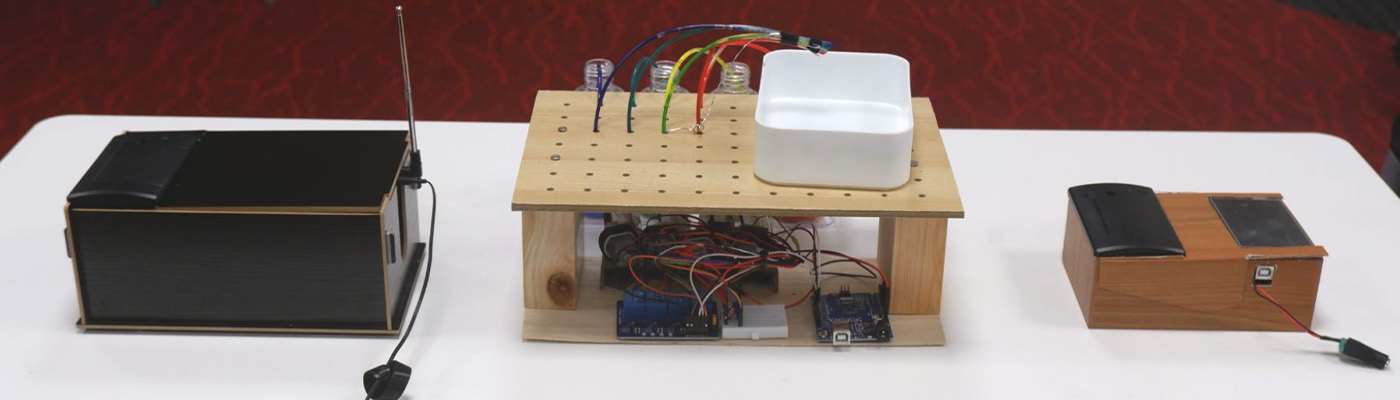

To prepare for user testing, I recruited 12 participants aged 20 to 25, all with design backgrounds. To keep the testing process manageable, I divided them into two groups and scheduled each group on a separate day. This approach made it easier to focus and give individual attention to each participant. For the testing space, the school offered library smartroom F405. Fortunately, users from my cohort were willing to take part, and one user from a different school was lured in to help with the user testing in exchange for dinner.

User Testing (Week 3)

The user testing ran across two days. The smartroom worked well for one-on-one interactions. The first session involved seven participants from my cohort, along with a graphic design student from another school who'd agreed to join in exchange for dinner, a small price for the extra perspective. The second session, held on Saturday, involved four working graphic designers, most of whom I'd connected with through mutual peers.

Each session began with a brief overview of the study's goals and how participants' data would be used. Formal consent forms weren't required, and I kept the tone conversational but clear to make sure everyone felt comfortable before diving in. Participants then completed a short digital pre-survey on my iPad, which captured baseline demographics and their prior understanding of and experience with EEG. To avoid order bias, I randomised the sequence in which participants engaged with the three interfaces. Post-interaction, participants filled out the quantitative evaluation forms for the souvenir and the adapted AttrakDiff survey digitally.
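The randomised ordering described above could be generated in a few lines. This is only a minimal sketch, not the procedure actually used in the study: the participant labels (`P01` to `P12`), the fixed seed, and the function name `assign_orders` are all assumptions made for illustration.

```python
import random

PROTOTYPES = ["Dream Journal", "Frequent Encounters", "Wavelet"]

def assign_orders(participant_ids, seed=None):
    """Map each participant to an independently shuffled prototype order."""
    rng = random.Random(seed)  # a fixed seed makes the schedule reproducible
    orders = {}
    for pid in participant_ids:
        order = PROTOTYPES[:]  # copy so the master list is never mutated
        rng.shuffle(order)
        orders[pid] = order
    return orders

# Hypothetical labels for the 12 participants.
participants = [f"P{n:02d}" for n in range(1, 13)]
schedule = assign_orders(participants, seed=4)

for pid, order in schedule.items():
    print(pid, "->", ", ".join(order))
```

Independent shuffling does not guarantee that every order is used equally often. With three interfaces there are only six possible orders, so for 12 participants a fully counterbalanced schedule (each order used twice) would be a reasonable alternative.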

Logistically, there were hiccups. On day one, the marble paste, though prepped on Wednesday, became messy after one or two sessions, prompting me to refresh it between slots. I made a note to prepare multiple containers for day two so that the process would be smoother. Time management was tight: sticking to 15-minute slots required a timer and gentle nudges, but realistically the slot length was appropriate for controlled user testing.

Dream Journal (Evaluation)

The Dream Journal prototype received the highest ratings for Good (Average = 1.92) and Captivating (1.67), indicating that participants perceived it as the most engaging and well-executed among the three. However, it scored the lowest in Stylish (0.42) and Premium (-0.58), suggesting that while it was considered imaginative and engaging, it lacked a sense of refinement or high-quality aesthetics.

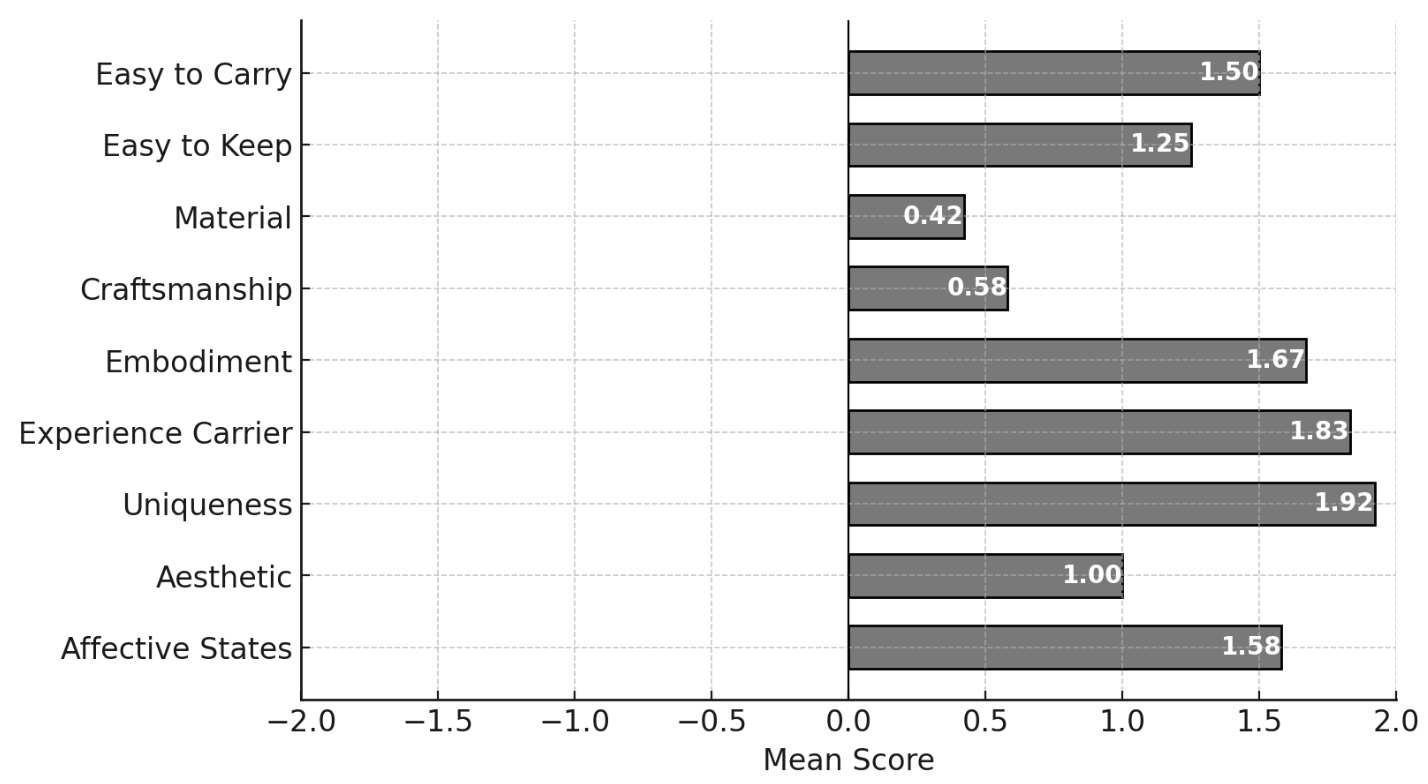

In the souvenir evaluation metric, Dream Journal was rated highest in embodiment (1.67) and experience carrier (1.83), indicating its effectiveness in representing a meaningful and memorable experience (refer to Appendix D). It also scored the highest for uniqueness (1.92) and had the strongest emotional impact (1.58), indicating that it resonated most with participants on an affective level. However, its aesthetic appeal (1.00) was lower than Wavelet's, reinforcing its perceived lack of refinement.

During the follow-up interviews, an interesting observation emerged: six participants independently referred to the printed outcome as a "receipt," despite the term never being mentioned in the interface's title or introduction. This association stemmed from the material's familiarity and the idea that the output acts as a form of documentation. Many users drew parallels between the nonsensical text output and their own experiences of waking from a dream, linking the act of reading the printed text to the fragmented recollection of dreams upon waking.

In the pre-testing survey, 9 out of 12 participants (75%) ranked Dream Journal as their top choice among the prototypes. After user testing, this preference decreased slightly to 7 participants (58.3%), as two users switched their preference to Wavelet. One participant explained this change, noting that while Dream Journal remained the most intriguing to read, they ultimately preferred Wavelet for its more straightforward, visually based output. They felt that it offered a better opportunity to compare their initial and subsequent responses when revisiting the interface.
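The percentages above follow directly from the reported counts. As a quick check of that arithmetic, here is a small sketch; the helper name `share` and the variable names are hypothetical, and only the counts (12 participants, 9 before, 2 switching) come from the results.

```python
def share(count, total):
    """Percentage of participants, rounded to one decimal place."""
    return round(100 * count / total, 1)

TOTAL = 12               # participants in the study
pre_count = 9            # ranked Dream Journal first before testing
switched_to_wavelet = 2  # changed their top choice after testing
post_count = pre_count - switched_to_wavelet

pre_share = share(pre_count, TOTAL)
post_share = share(post_count, TOTAL)
print(pre_share, post_share)  # 75.0 58.3
```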

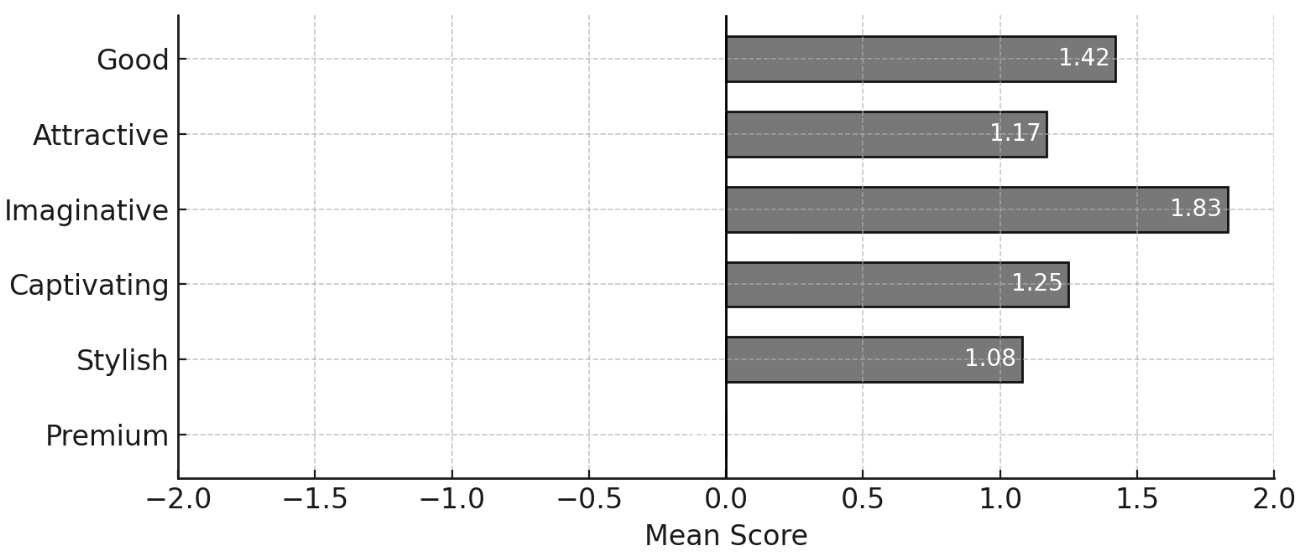

Frequent Encounters (Evaluation)

The Frequent Encounters prototype stood out in Imaginative (1.83), suggesting that participants found it to be the most creative. However, it received low ratings for Attractive (1.17) and Premium (0.00), indicating that while it was perceived as conceptually interesting, it lacked strong visual appeal and did not convey a high-end feel.

In the souvenir evaluation, Frequent Encounters performed moderately well in portability and storage, with ratings similar to Dream Journal (1.5 for carrying and 1.25 for keeping). However, it was rated the lowest for craftsmanship (0.17) and aesthetic appeal (0.58), suggesting that participants found it less well-made and visually unrefined. It was also the least effective of the three prototypes at embodying user experiences (1.08).

The follow-up interviews revealed general confusion about the relationship between the radio interface and its printed outcome. One participant summarised this perspective, stating that there was no "meaningful outcome to carry home." While the radio interface offered the most immediate real-time feedback through audio, it ultimately proved to be the least engaging overall.

However, the concept itself was relatively well received. One participant noted that although it was the least engaging of the three interfaces, it was the concept that intrigued them the most and would stay with them the longest.

Wavelet (Evaluation)

The Wavelet prototype performed relatively well in Good (1.67) and had the highest ratings for Attractive (1.5) and Premium (0.33). This suggests that it was perceived as visually appealing and well-designed, while also conveying a relatively higher quality compared to the others. The glass bottles holding the paint contributed to this impression, with one participant describing it as resembling "an old alchemy or chemistry experiment."

In the souvenir evaluation, Wavelet received the highest ratings for aesthetic appeal (1.67) and craftsmanship (1.17), suggesting that it was perceived as the most visually refined and well-constructed. However, it had the lowest ratings for material quality (0.33) and ease of keeping (0.83), indicating that participants rated its materials less favourably and considered it slightly less convenient to store.

The follow-up interviews revealed a consensus that Wavelet was the most engaging in terms of interaction. While some users of the other prototypes shifted between meditative states and active observation, participants engaging with Wavelet were more likely to remain consistently in one state, mostly actively observing the interface's operation. One participant remarked, "I like that I can see the paint in real time—how it moves and spreads. It's like a physical bar graph, and at the end, I get a nice little painting. Sometimes I try to predict the colour of the next droplet, but another colour appears first, which makes it interesting to observe."

Another participant called the resulting souvenir a more physical version of real-time data visualisation, stating that watching the paint flow felt more engaging than the screen-based visualisation in Dream Journal. This aligned with the guidelines from Petrelli et al.'s study on tangible data souvenirs, which found that when the real-time production of an output was visible, participants were more inclined to keep the resulting souvenir.

Wavelet saw a slight positive shift in preference between the pre-testing and post-testing surveys. As mentioned in the Dream Journal interview results, this was because some participants, after experiencing the interfaces, preferred a more straightforward, visual-based outcome that made it easier to compare their responses, leading them to shift their preference to Wavelet.