05

Making with EEG:

3 Tasks in Progress

09.09.2024 ~ 15.09.2024

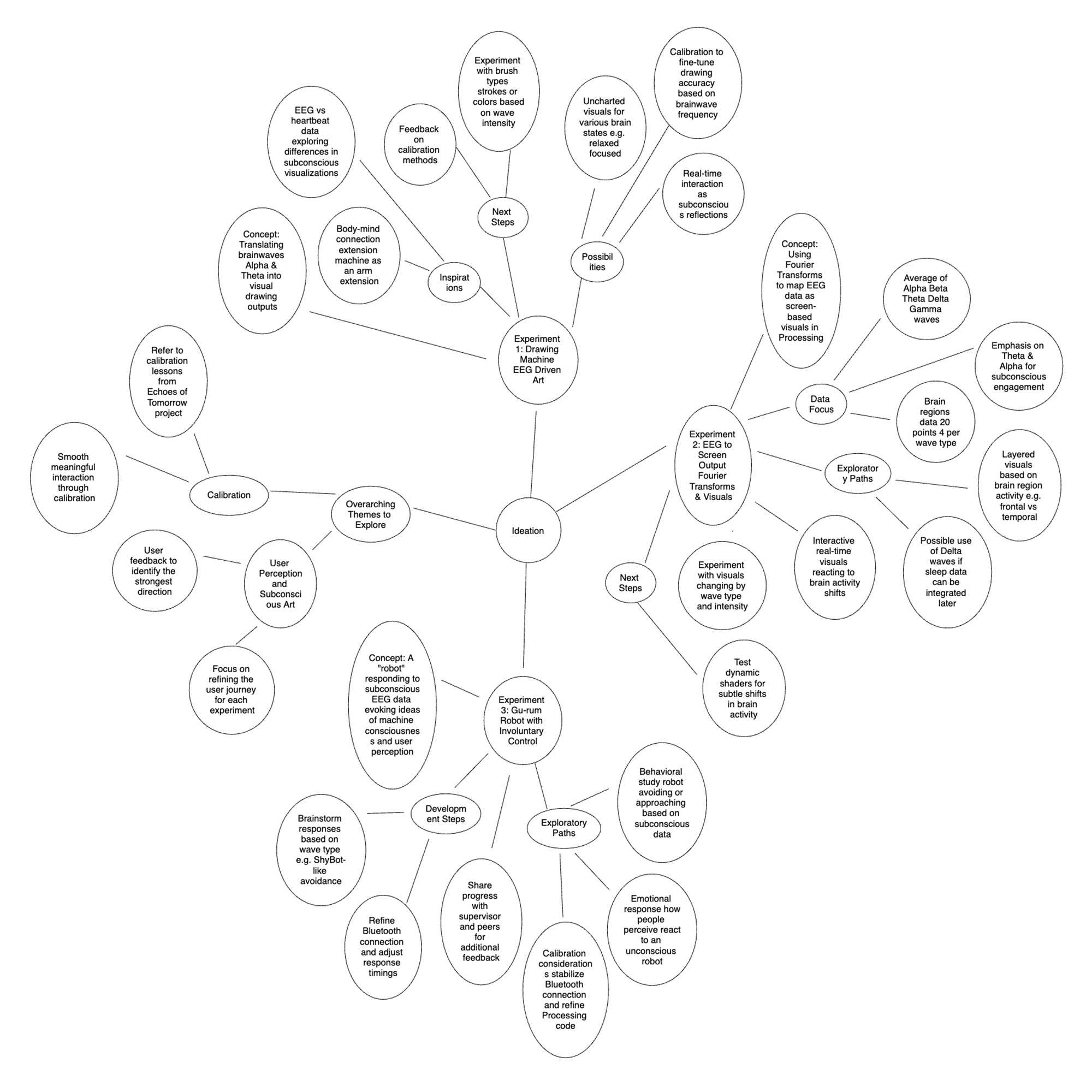

Ideation for Physical Making

This week, I was quite busy with another project, so I wasn’t able to dedicate as much time to my experiments as I had planned. However, I did make progress on ideation for future tasks and on the technical setup of three experiments, which I’ll dive into fully next week. Here’s a brief overview of where things stand.

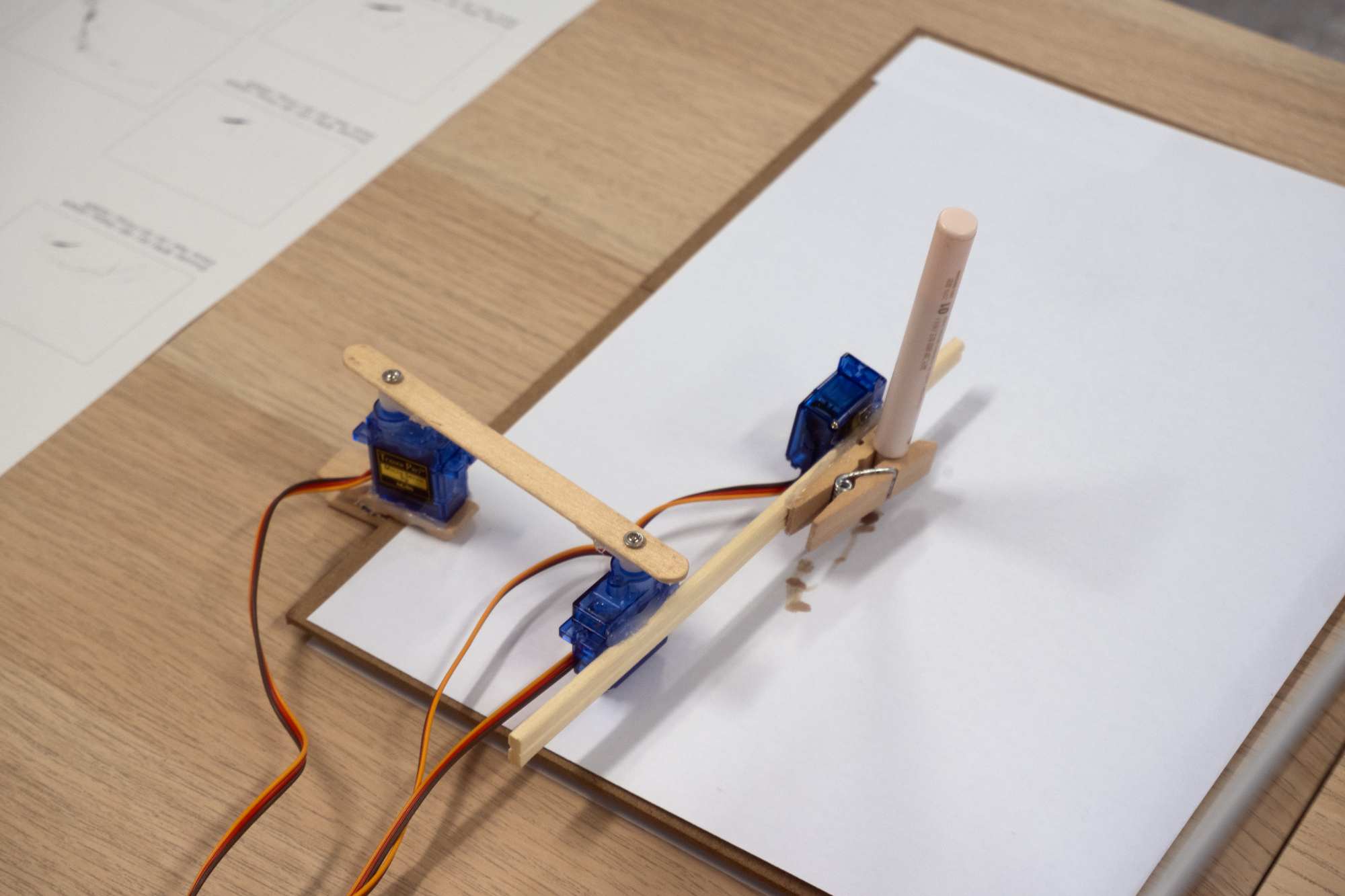

Experiment 1: Drawing Machine

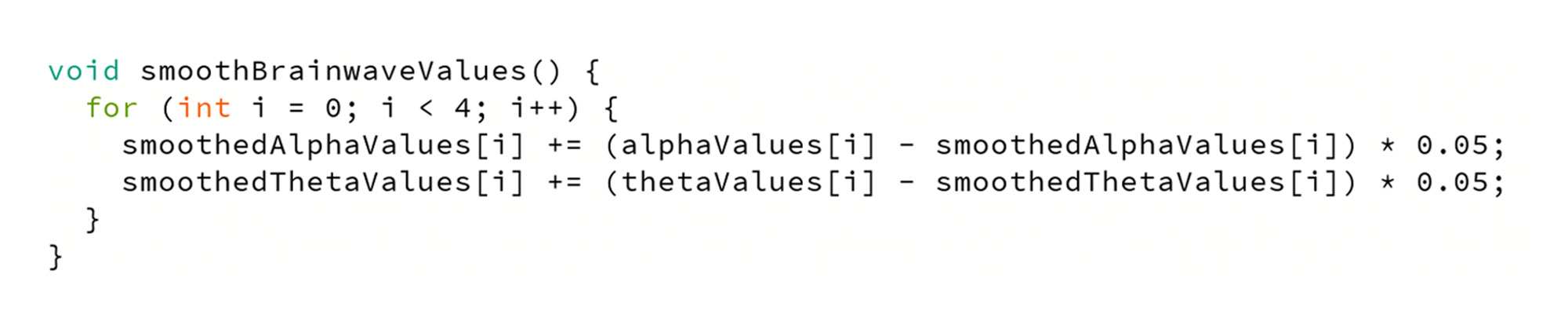

I’m continuing my previous work, where I originally used heartbeat data to drive a drawing machine. This time, I plan to expand by using EEG data instead of pulse. The machine will essentially translate brainwaves (specifically Alpha and Theta waves) into visual outputs, making the drawing process a reflection of my subconscious mind.

I see this as an extension of the body-mind connection, almost as if the machine itself becomes an extension of my arm. This week, I connected the EEG device and received data from it, but I haven’t fully calibrated it yet. Calibration will be the main focus of the next phase.
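A minimal sketch of what that calibration step could look like, written in Python for brevity (the same logic ports to Processing). It assumes a short resting-state recording is used to find the range of each wave, and that Alpha drives the x axis and Theta the y axis. The function names, the axis assignment, and the sample numbers are all hypothetical, not the actual setup.

```python
def calibrate(baseline):
    """Derive (low, high) bounds from a list of resting-state readings."""
    low, high = min(baseline), max(baseline)
    if low == high:
        raise ValueError("baseline has no spread; record a longer sample")
    return low, high


def normalize(value, bounds):
    """Scale a live reading into 0.0-1.0, clamping values outside the baseline."""
    low, high = bounds
    scaled = (value - low) / (high - low)
    return max(0.0, min(1.0, scaled))


def pen_position(alpha, theta, alpha_bounds, theta_bounds, width=200, height=200):
    """Map Alpha to the x axis and Theta to the y axis (an assumed mapping)."""
    x = normalize(alpha, alpha_bounds) * width
    y = normalize(theta, theta_bounds) * height
    return x, y


# Made-up baseline readings, for illustration only.
alpha_bounds = calibrate([1.0, 2.0, 3.0])
theta_bounds = calibrate([0.0, 2.0, 4.0])
print(pen_position(2.0, 5.0, alpha_bounds, theta_bounds))
```

Clamping keeps the pen inside the drawing area when a live reading falls outside the range seen during the baseline recording.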

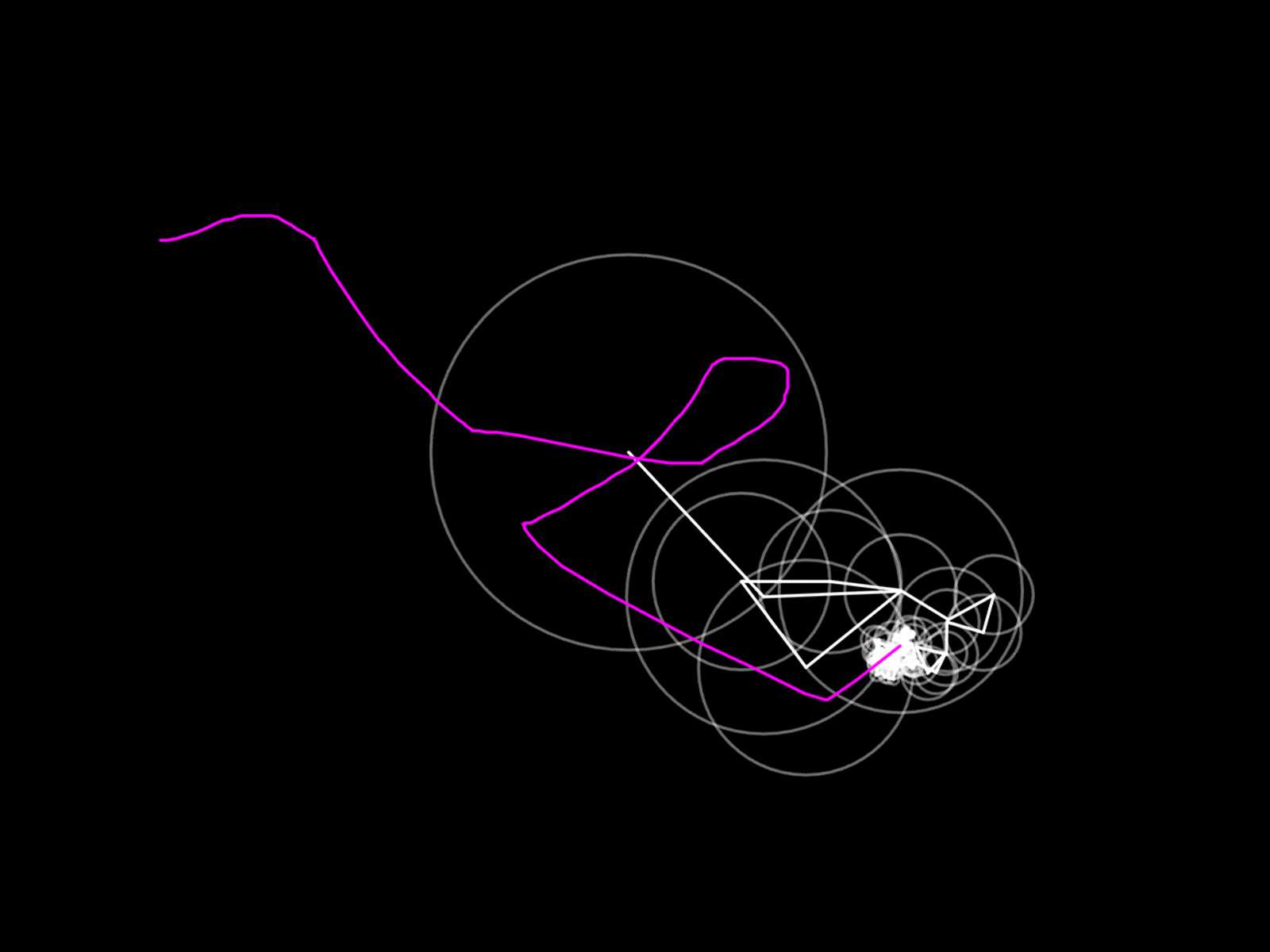

Experiment 2: EEG to Screen Output

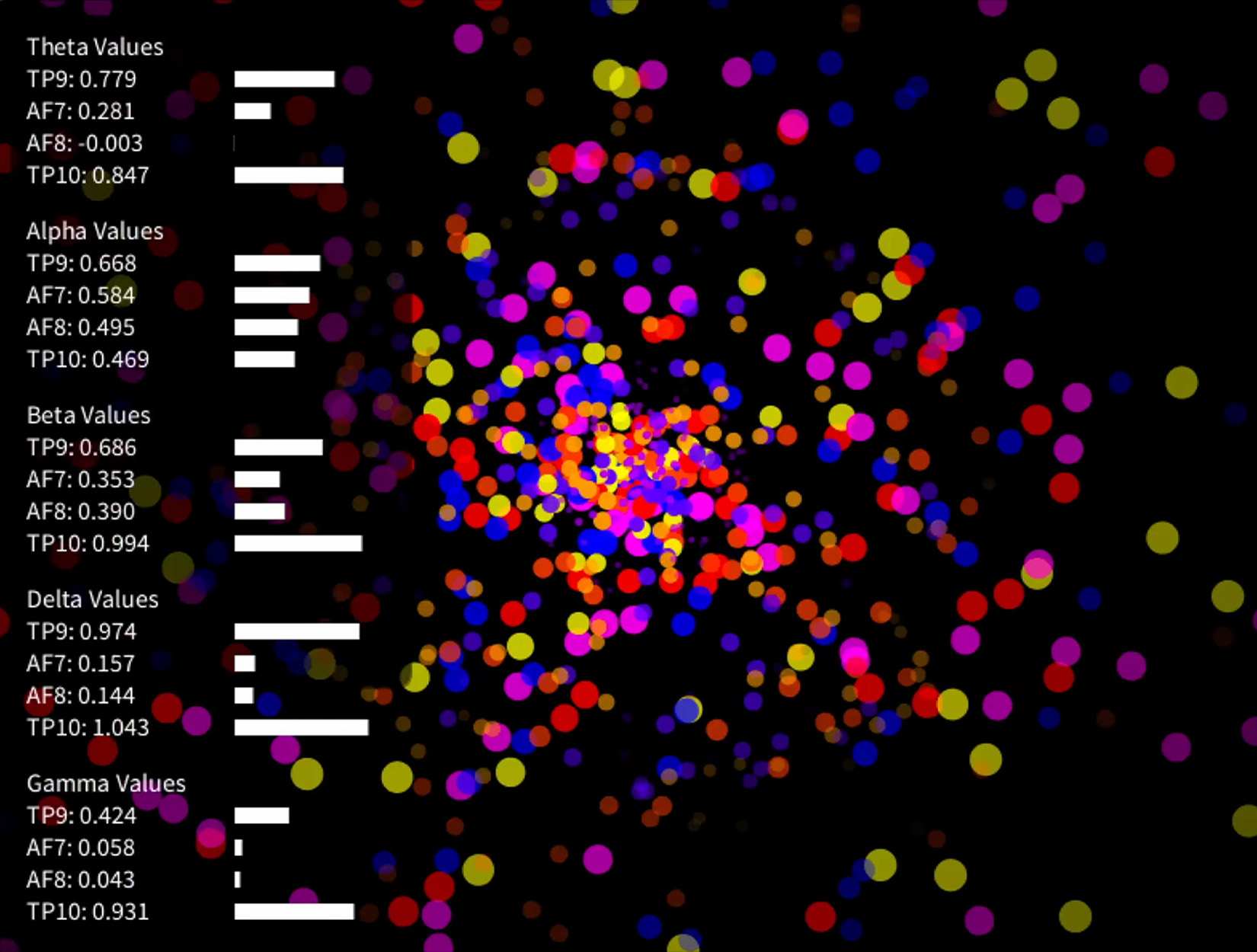

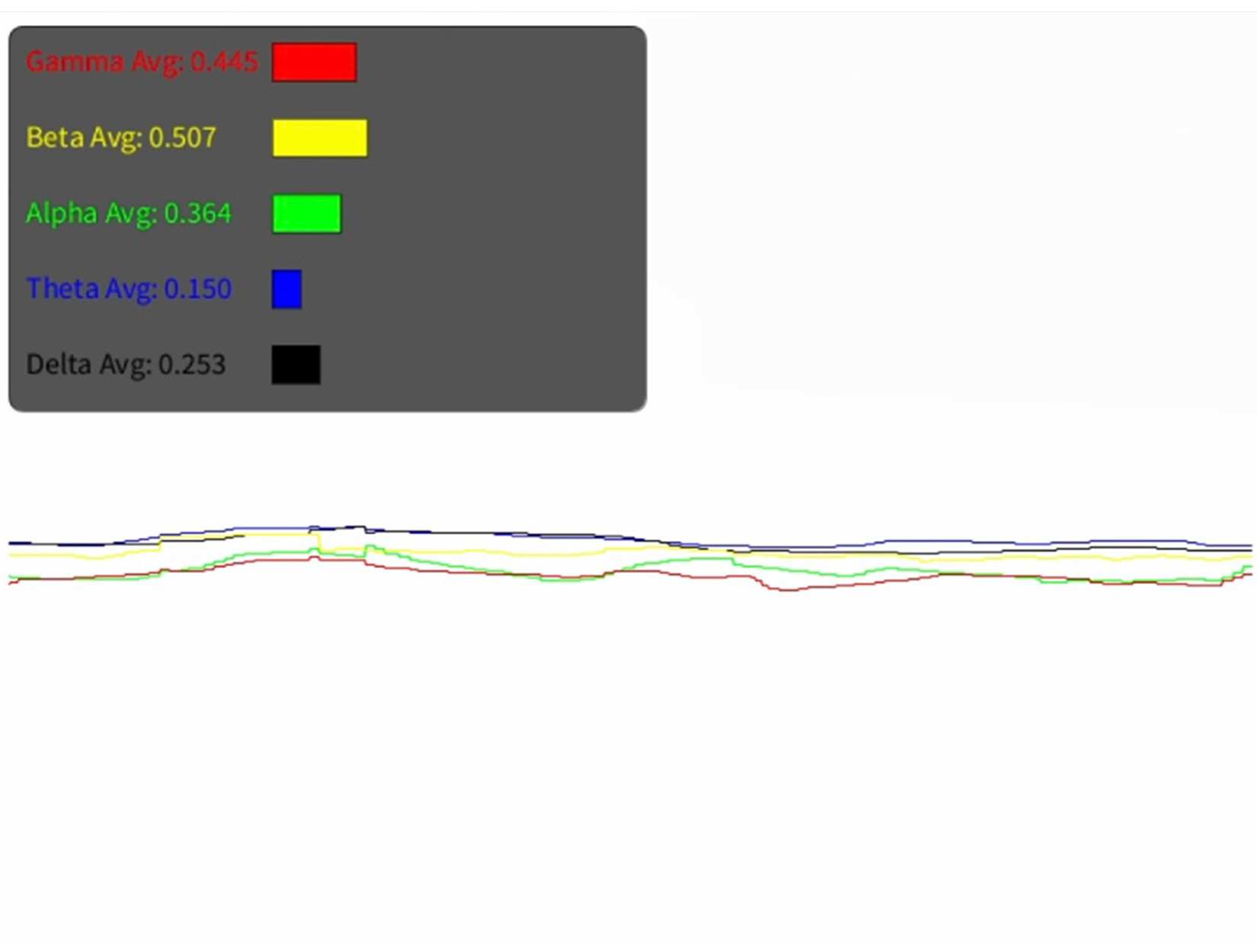

This experiment involves visualizing brainwave data using Fourier transforms in Processing. I’ve been testing how I can extract the necessary data from my Muse EEG device, and after some troubleshooting, I’ve figured out how to pull both raw and processed data (such as averages of brainwave types or data from specific parts of the brain). I’ll be working with three main sets of data:

- The average of the five main brainwave types (Alpha, Beta, Theta, Delta, and Gamma).

- Absolute values from different brain regions, giving me a total of 20 data points (four regions for each of the five brainwave types).

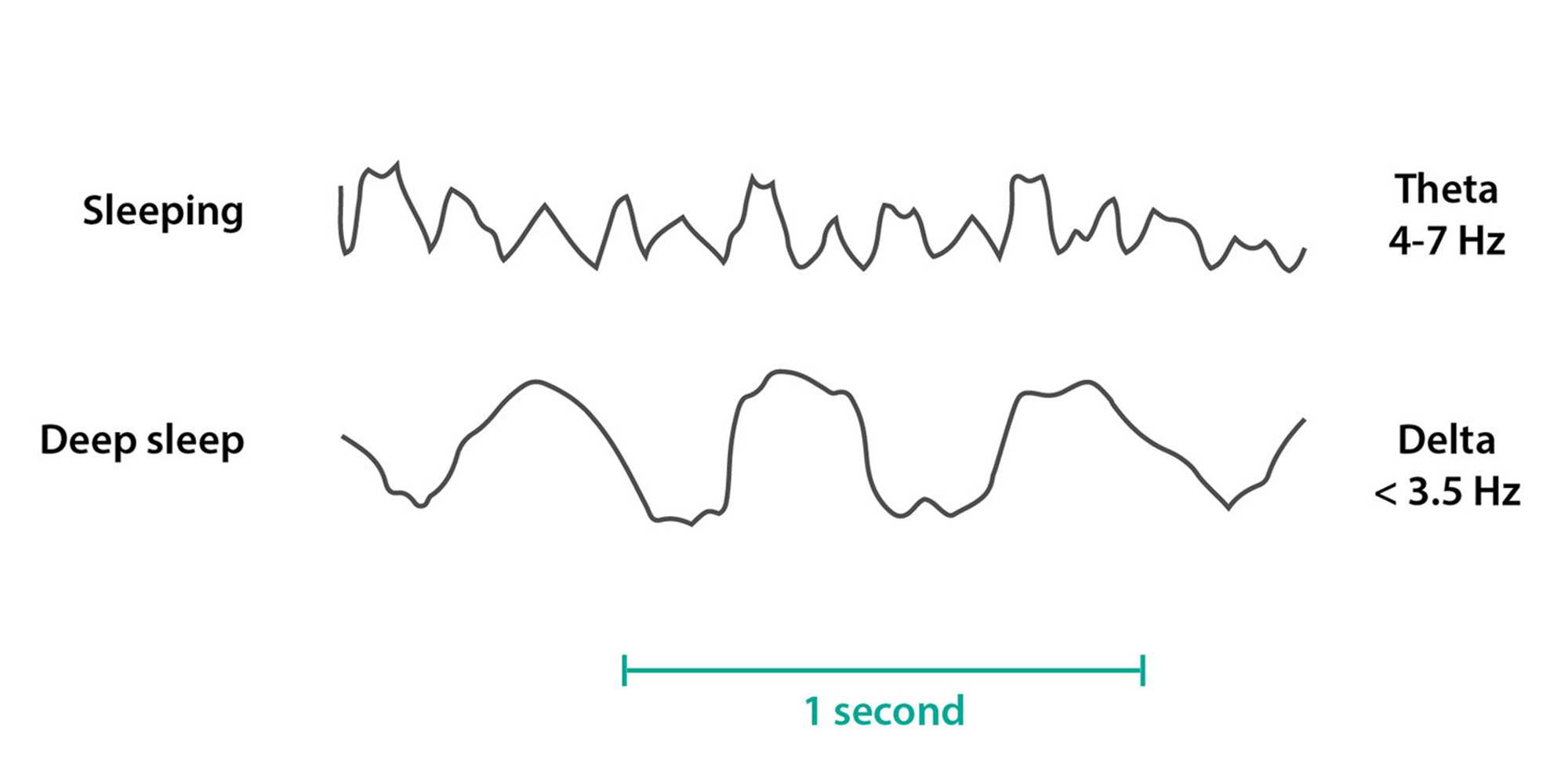

- A focus on Theta and Alpha waves, which tie more directly into my theme of subconscious exploration.
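The three datasets above can be sketched as views over the same 20 values. This is an illustration in Python, not the Processing code: it assumes each reading arrives as a mapping from wave type to four per-region values, and the region names and their order are placeholders.

```python
BANDS = ["alpha", "beta", "theta", "delta", "gamma"]
# Assumed region order; the real order depends on how the device reports data.
REGIONS = ["left_temporal", "left_frontal", "right_frontal", "right_temporal"]


def band_averages(readings):
    """Dataset 1: one average per wave type across the four regions."""
    return {band: sum(readings[band]) / len(readings[band]) for band in BANDS}


def by_region(readings):
    """Dataset 2: all 20 values, keyed by (wave type, region)."""
    return {
        (band, region): value
        for band in BANDS
        for region, value in zip(REGIONS, readings[band])
    }


def alpha_theta(readings):
    """Dataset 3: only the Alpha and Theta values."""
    return {band: readings[band] for band in ("alpha", "theta")}


# Made-up numbers, for illustration only.
sample = {band: [1.0, 2.0, 3.0, 4.0] for band in BANDS}
print(band_averages(sample)["alpha"])
print(len(by_region(sample)))
```

Keeping one source structure and deriving the three views from it means every visual output reads from the same snapshot of brain activity.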

I originally considered using Delta waves (since they’re linked to deep sleep), but given my limited resources and time, it would be challenging to do user testing on different people without involving long-term data collection during sleep, which I’m not equipped to do right now. Next week, I’ll start experimenting with different visual outputs using these datasets.

The goal is to create a highly interactive, real-time visual experience that responds to subtle shifts in brain activity, adding another layer of complexity to the relationship between mind and visuals. I haven’t had much time to explore this yet, but I’m excited to push this idea further next week.

In this experiment, I’m using Theta and Alpha waves to control dynamic shaders in Processing. The interesting twist is that I’m incorporating data from different regions of the brain—the frontal and temporal lobes—so the visual outputs will change depending on where the brain activity is happening.
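One way the region-dependent behaviour could be structured is sketched below in Python. It assumes each wave arrives as four non-negative values, with the two middle values frontal and the two outer values temporal. That index layout, the `u_` parameter names, and the "frontal share" value are all hypothetical choices for illustration.

```python
# Assumed positions of each region in a four-value reading (hypothetical order).
FRONTAL = (1, 2)
TEMPORAL = (0, 3)


def region_mean(values, indices):
    """Average the readings that belong to one brain region."""
    return sum(values[i] for i in indices) / len(indices)


def shader_params(alpha, theta):
    """Build named shader inputs from four-value Alpha and Theta readings.

    Assumes all readings are non-negative.
    """
    frontal = region_mean(alpha, FRONTAL) + region_mean(theta, FRONTAL)
    temporal = region_mean(alpha, TEMPORAL) + region_mean(theta, TEMPORAL)
    total = frontal + temporal
    return {
        "u_alpha_frontal": region_mean(alpha, FRONTAL),
        "u_alpha_temporal": region_mean(alpha, TEMPORAL),
        "u_theta_frontal": region_mean(theta, FRONTAL),
        "u_theta_temporal": region_mean(theta, TEMPORAL),
        # 0.0 = all activity temporal, 1.0 = all frontal, 0.5 when there is no signal.
        "u_frontal_share": frontal / total if total > 0 else 0.5,
    }


# Made-up readings, for illustration only.
params = shader_params(alpha=[0.0, 2.0, 2.0, 0.0], theta=[1.0, 1.0, 1.0, 1.0])
print(params["u_frontal_share"])
```

In Processing, values like these would typically be passed to a shader as uniforms with `PShader.set()`, so a single number such as the frontal share could shift colour or distortion depending on where activity is strongest.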

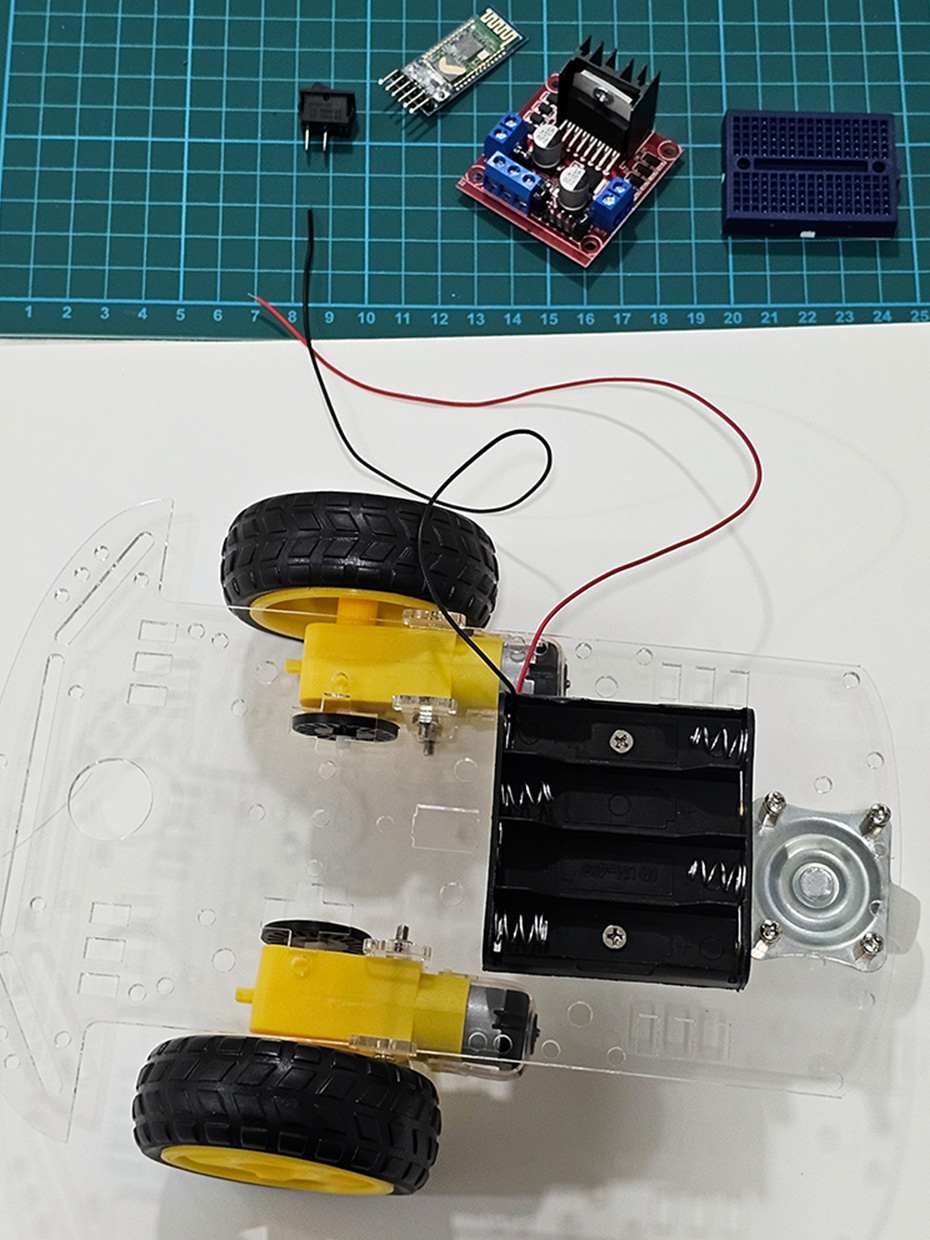

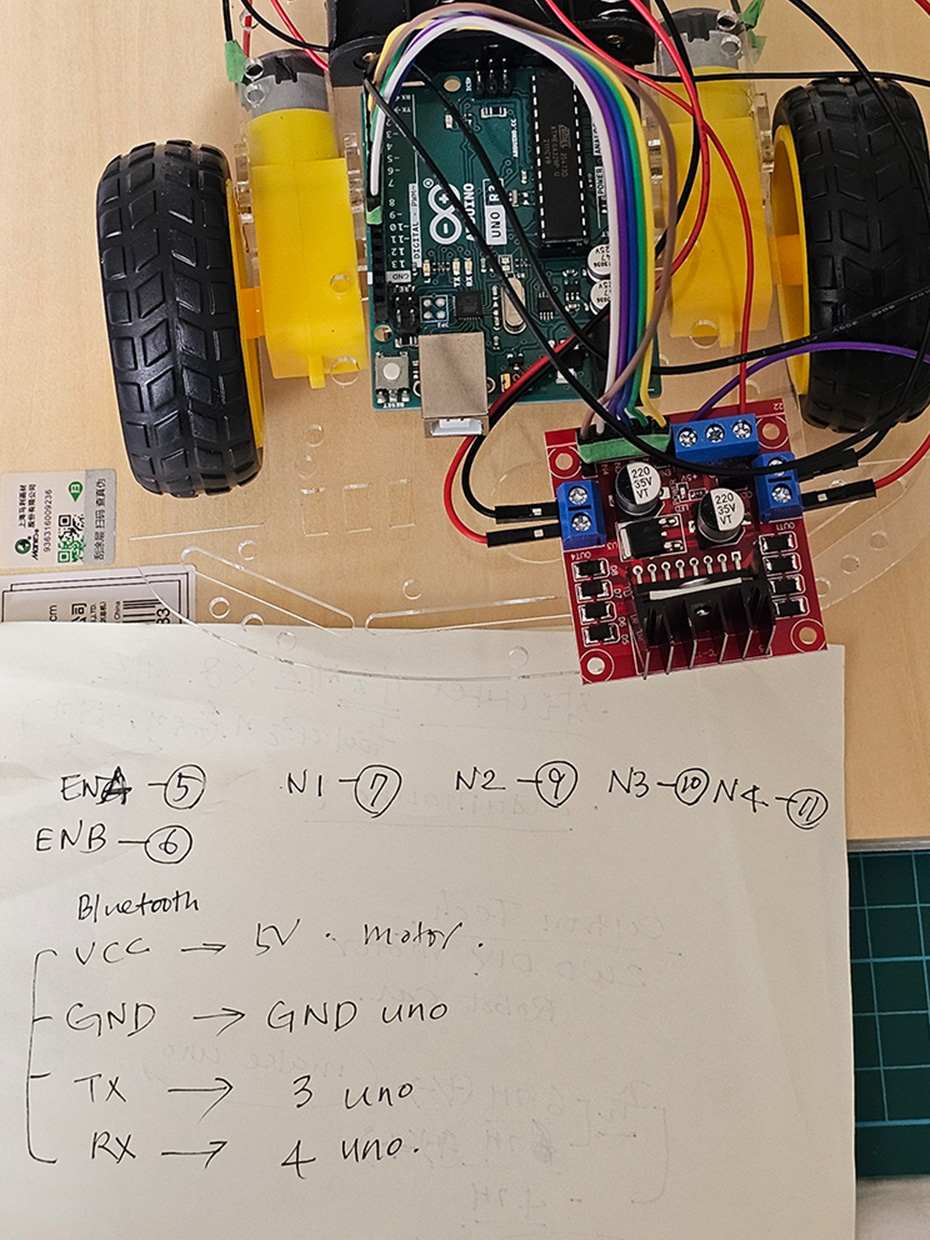

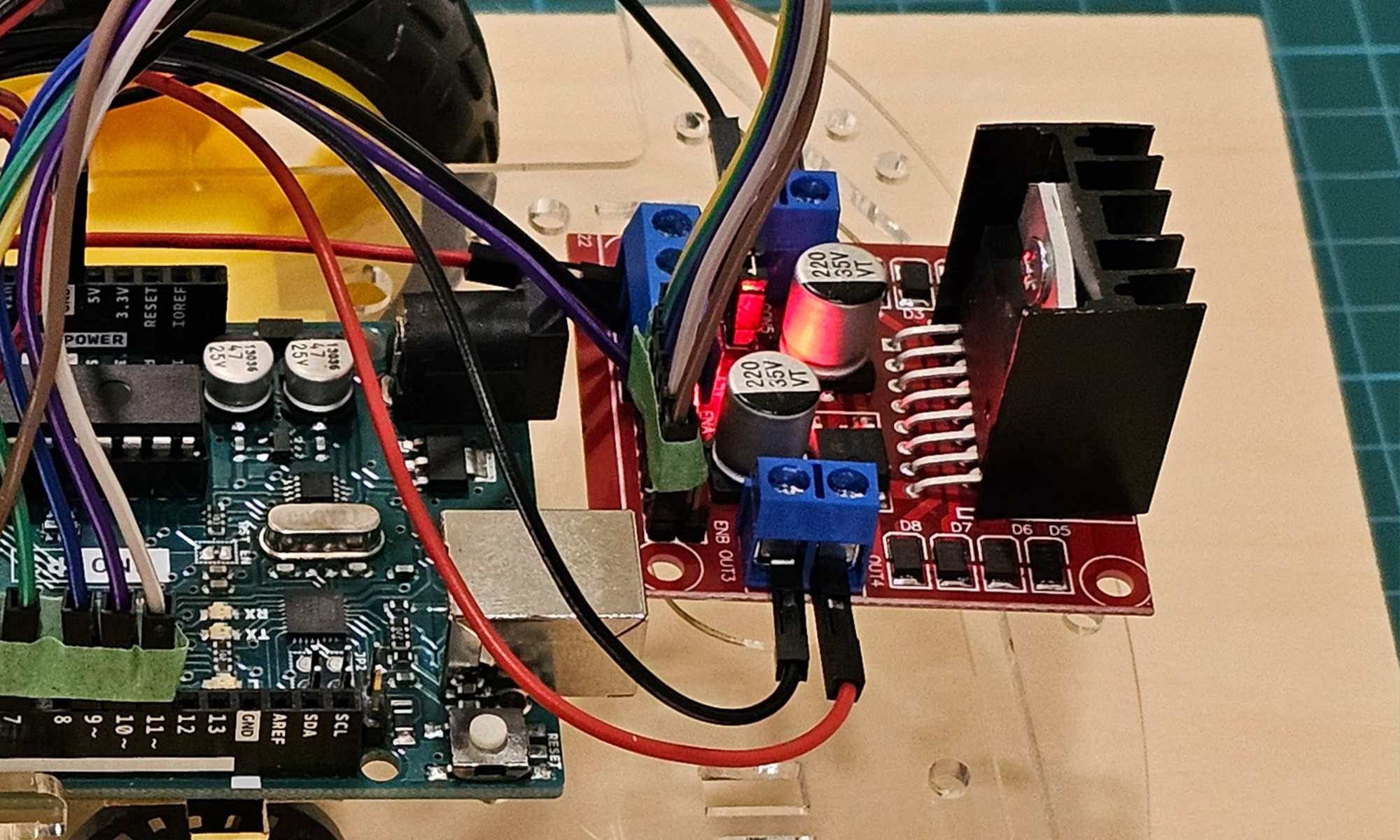

Experiment 3: Gu-rum

This is a completely new direction for me. Rather than focusing on whether machines can gain consciousness, I’m more interested in how people perceive machine consciousness. The idea is to create a robot that reacts to my brainwaves in an involuntary way, similar to how ShyBot avoids people when they approach. It’s an experiment in how people respond emotionally to a robot that is driven by subconscious, involuntary brain activity.

Although I didn’t complete the technical setup for this experiment, I did begin testing the Bluetooth module. However, I encountered difficulties getting a stable connection between the Arduino and the Processing output. I was able to send basic commands to the robot, but it only responded to the first command and ignored subsequent ones. I’ll need to refine both the code and hardware connections to make this work.
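The cause of the dropped commands is not yet known. One thing worth ruling out is message framing: serial data can arrive in arbitrary chunks, so a receiver that expects one whole command per read may handle the first and then lose track. The Python sketch below shows newline-delimited framing as a general pattern. It is not a diagnosis of this setup, and the command names are made up.

```python
def frame(command):
    """Sender side: terminate every command with a newline."""
    return command.encode("ascii") + b"\n"


class CommandBuffer:
    """Receiver side: collect bytes and return only complete commands."""

    def __init__(self):
        self._pending = b""

    def feed(self, chunk):
        """Add newly received bytes and return any commands completed by them."""
        self._pending += chunk
        # Everything before the last newline is complete; the rest waits for more data.
        *complete, self._pending = self._pending.split(b"\n")
        return [c.decode("ascii").strip() for c in complete if c.strip()]


# Hypothetical commands, split across two reads as a serial link might deliver them.
stream = frame("FWD") + frame("LEFT") + frame("STOP")
buffer = CommandBuffer()
print(buffer.feed(stream[:6]))
print(buffer.feed(stream[6:]))
```

The same idea applies on the Arduino side: read until the delimiter, act on the command, then keep reading rather than assuming one command per read.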

The current name of the car is Gu-rum. It means cloud in Korean and is the name of my childhood dog. I just wanted a name that I could feel attached to while experimenting. Also, I can make a dad joke with this name: the car goes gu-rum, gu-rum!

Considerations

Even though I didn’t manage to complete the technical setup this week, I now have insights into the calibration process, something I hadn’t fully considered before. I realised from working on my Echoes for Tomorrow exhibition that calibration is essential for ensuring smooth user interaction, especially in interactive art. While I often prioritise the narrative, calibration plays a huge role in the overall user experience, so I’ll be focusing on that moving forward.

Next week, I’ll also be sharing my progress with my supervisor, Andreas, and seeking feedback from peers to determine which direction I should prioritise. I’m looking forward to refining the interaction in all of these projects and seeing which one develops into the most compelling outcome.

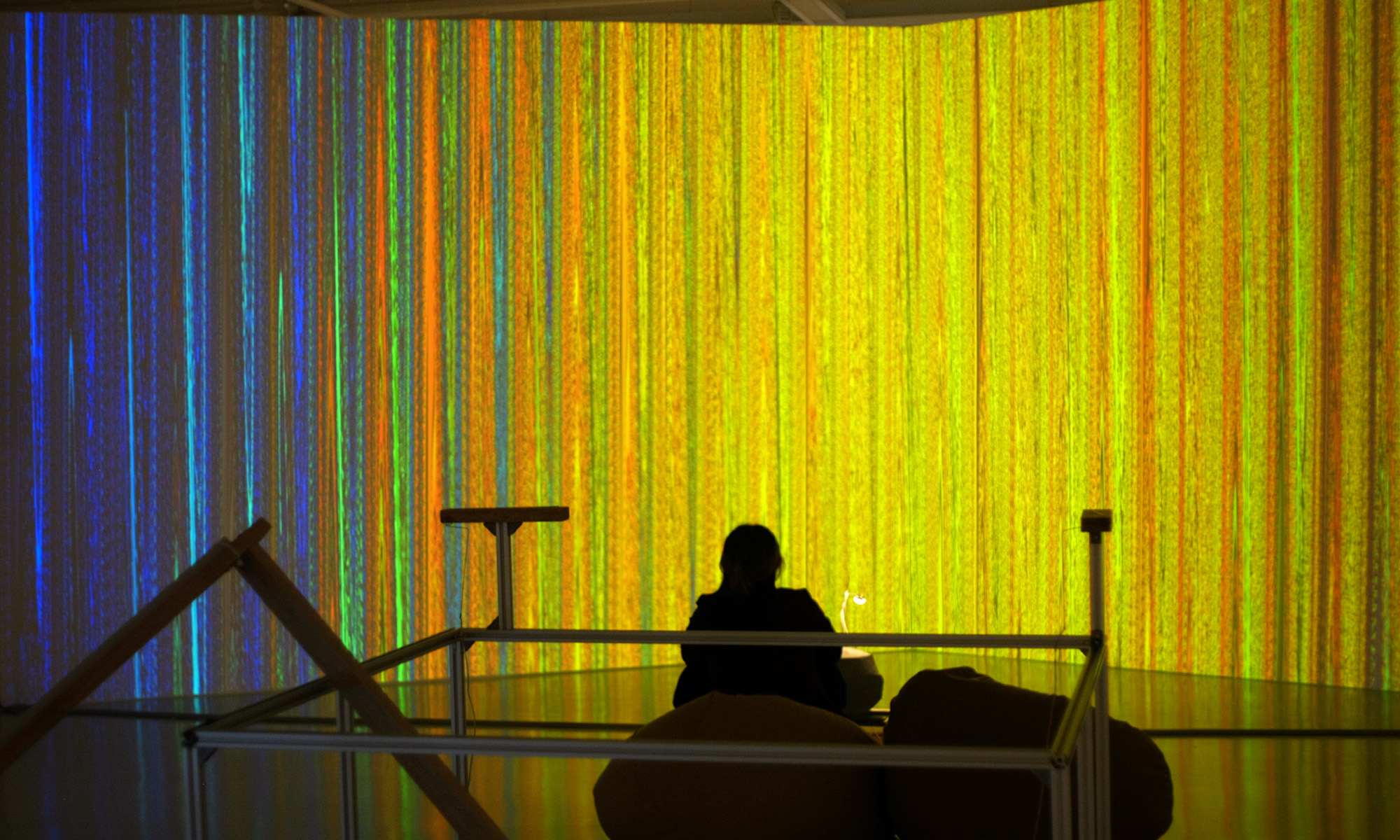

Echoes for Tomorrow

Since I mentioned it above, here is a brief description of what I did for "Echoes for Tomorrow", a separate exhibition project under my supervisor, Andreas. The exhibition was a dialogue between technology and culture, and my main role was to create interactive visuals with Processing and to craft an interface with light sensors. The main goal was to make the interaction intuitive, and simple yet engaging: avoiding sound and complex interfaces, and letting the interaction itself do the talking.

One thing I learned about designing an interactive experience, which I would like to embed in my current project, is how to balance user experience and conceptual integrity. In the past, I had always thought that I had to ‘compromise’ the narrativity and concept for interactivity and user experience, which is why I disliked interactive installations. However, I've come to realise that users create an illusion themselves while interacting with an installation. They project a subjective narrative onto the interaction, such as intentionality or a relationship. The narrative isn't compromised by user experience; rather, the two combine into a synergistic effect.