04

EEG:

Arrival of the MUSE Headset

02.09.2024 ~ 08.09.2024

Rants

Before introducing this week’s activities, I would like to document some thoughts from my research on recent developments in brain-controlled devices, since I have decided that my project will revolve around brain activity, and more specifically, EEG. In fact, my headband is due to arrive this week.

The idea of merging thought with machine once seemed like a distant vision. Yet today, we’re edging closer to that reality. Take Elon Musk's Neuralink, introduced in 2017: a coin-sized device implanted in the brain that translates brainwaves into commands. This technology is no longer confined to the realm of fiction.

I keep asking myself, what would it mean to think and see the world reshape itself based on the subtle movement of neurons? My first thought is immortality through mind uploading, just as in the synopsis of World of Tomorrow (2015), my favourite short film. What would it mean if my ‘consciousness’ could be uploaded to a machine as data?

Would this be considered as immortality? Would I still be myself, or would that new digital existence be a shadow, an imitation of the person I once was? The sensation of earth beneath my feet, these momentary, physical experiences "ground me". In a digital setting, without a body to process these sensations, what is left of me?

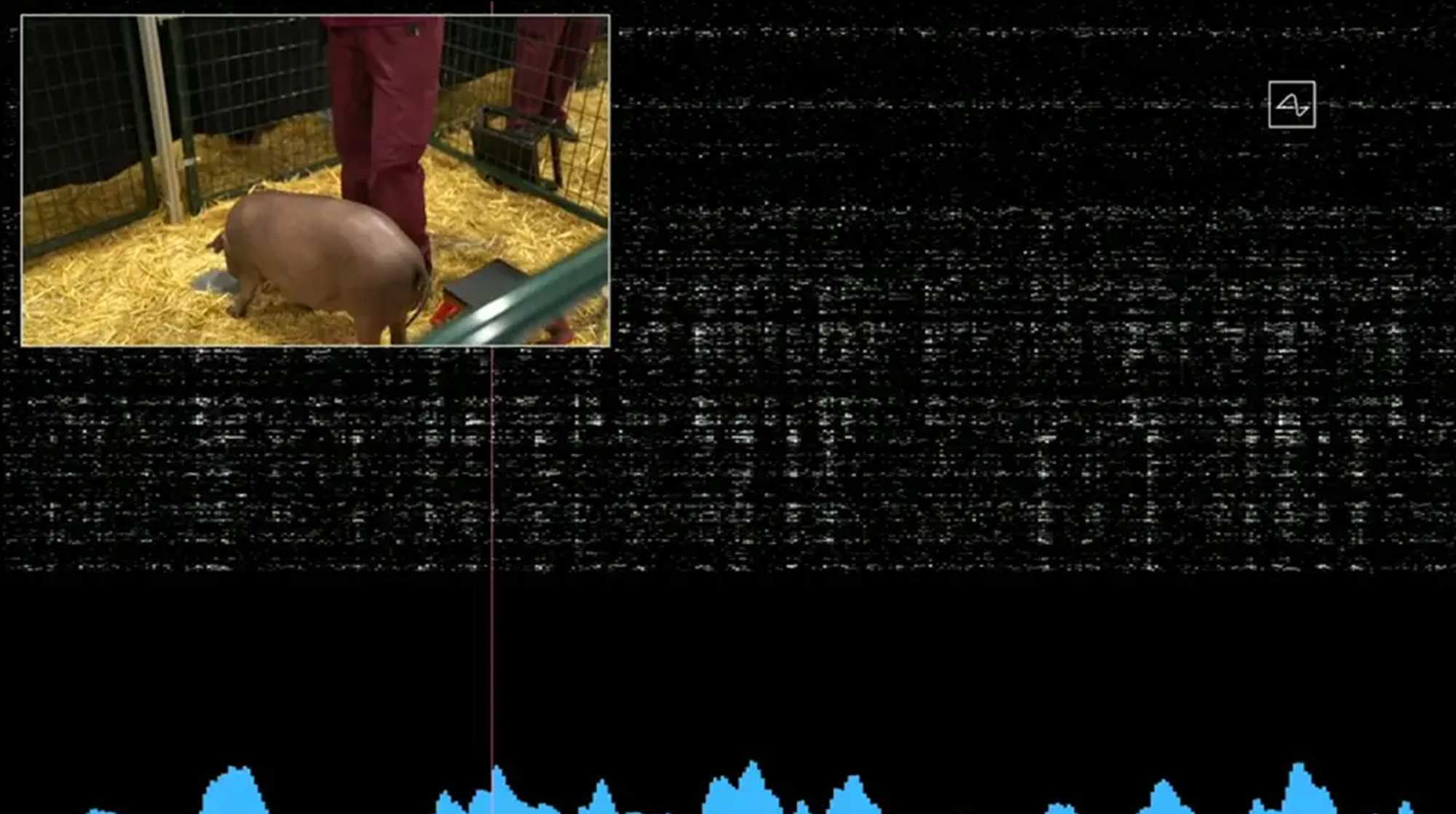

The Pig on a Treadmill

I have read an article that I would like to mention here. Neuralink, in their quest to develop brain-machine interfaces, implanted chips in pigs' brains to measure and predict their limb movements. The pig would run on a treadmill, while Neuralink could accurately predict the pig's movements based on its neural signals.

Noland Arbaugh, Neuralink User

wired.com/story/neuralink-first-patient-interview-noland-arbaugh-elon-musk/

The main goal is to help people with various disorders, such as those with spinal cord injuries. Children who have lost limbs due to accidents could soon control prosthetics simply by thinking. Now this is an incredibly fascinating yet worrying development for many. We could navigate the world without lifting a hand, yet lose the very thing that makes us human. The connection between thought and touch, mind and body. Is this the future we are ready for? It is vast and irreversible.

The boundaries between human and machine blur as technologies for brain-controlled prosthetics advance. If we begin to enhance not just our bodies but our minds, where do we draw the line? Enhanced memory, cognition, physicality. These are no longer within the domains of fiction but of ethics. Should the child who uses a prosthetic limb compete in the same race as a child without one? Should someone with an enhanced brain take the same test as someone without?

The Olympics of Cyborgs

As the 2024 Paris Olympics come to an end, I am reminded of another case study: the Cybathlon of 2016, where participants could control machines with their thoughts. When I first saw it, it felt like a rehearsal for a future we can barely comprehend.

And yet, despite these questions, I am drawn to the idea of transformation. Maybe it is in our nature to push forward, to see how far we can go, to transcend what we are and what we know. But will we lose ourselves in the process? Will we dream when we are machines?

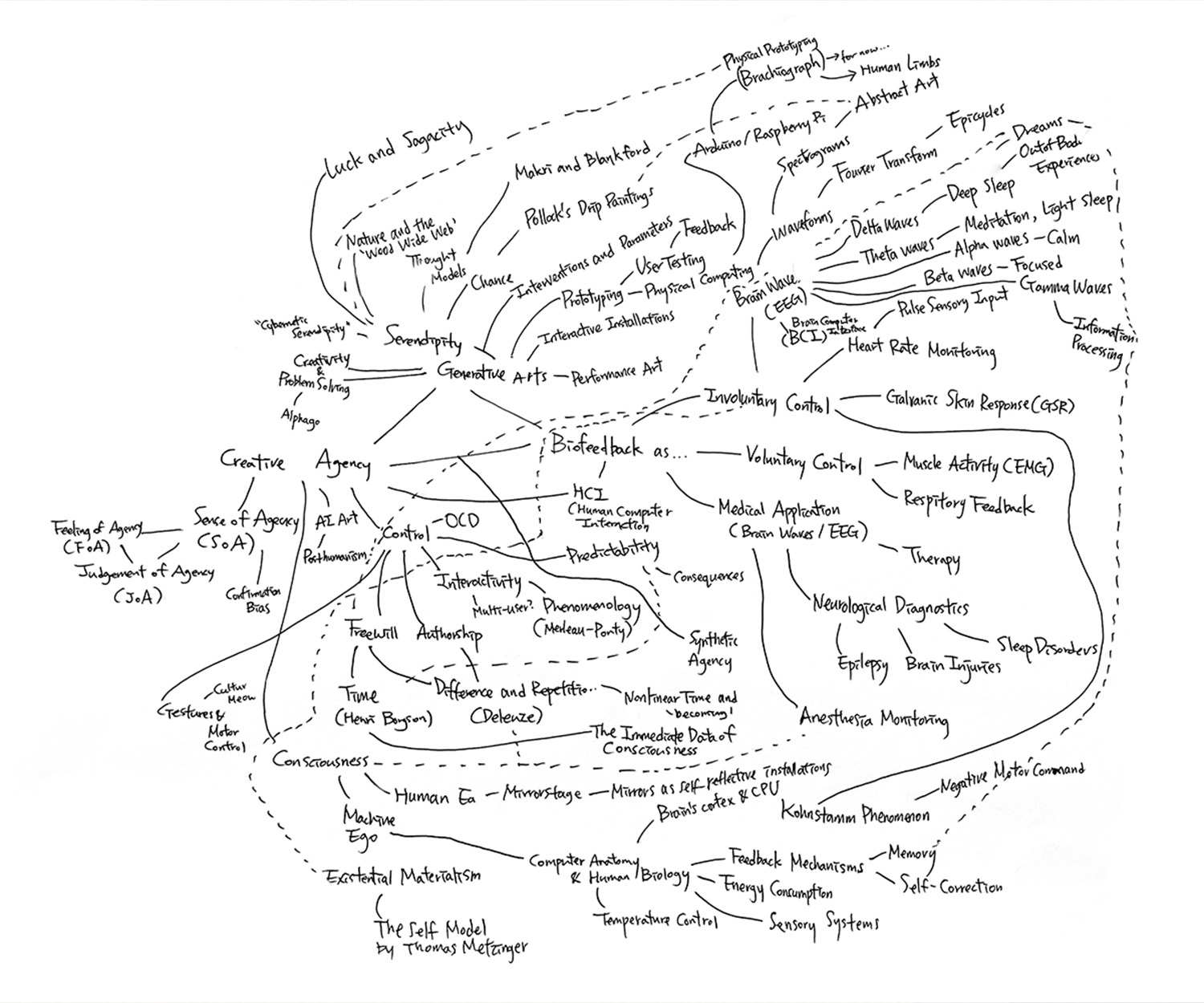

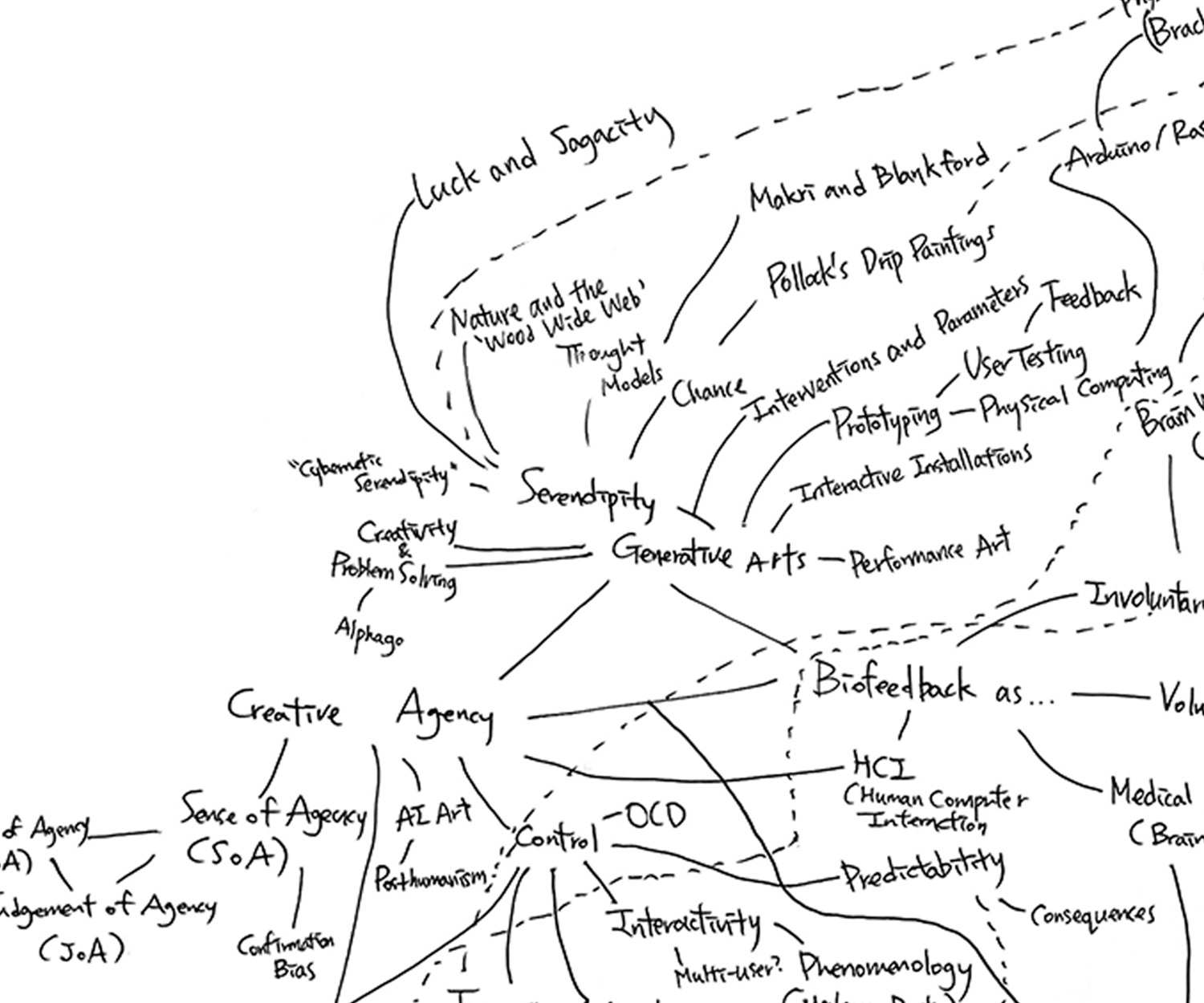

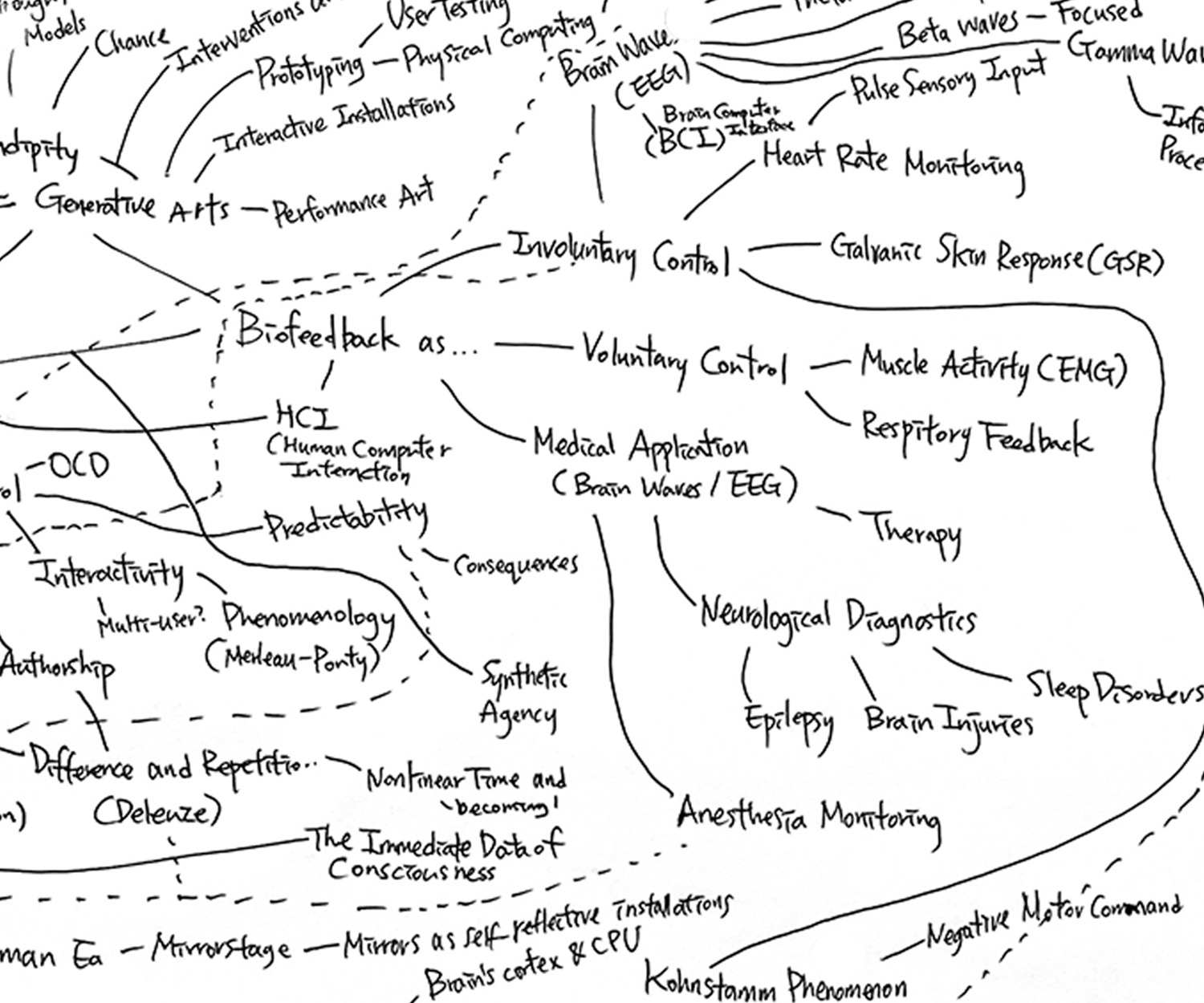

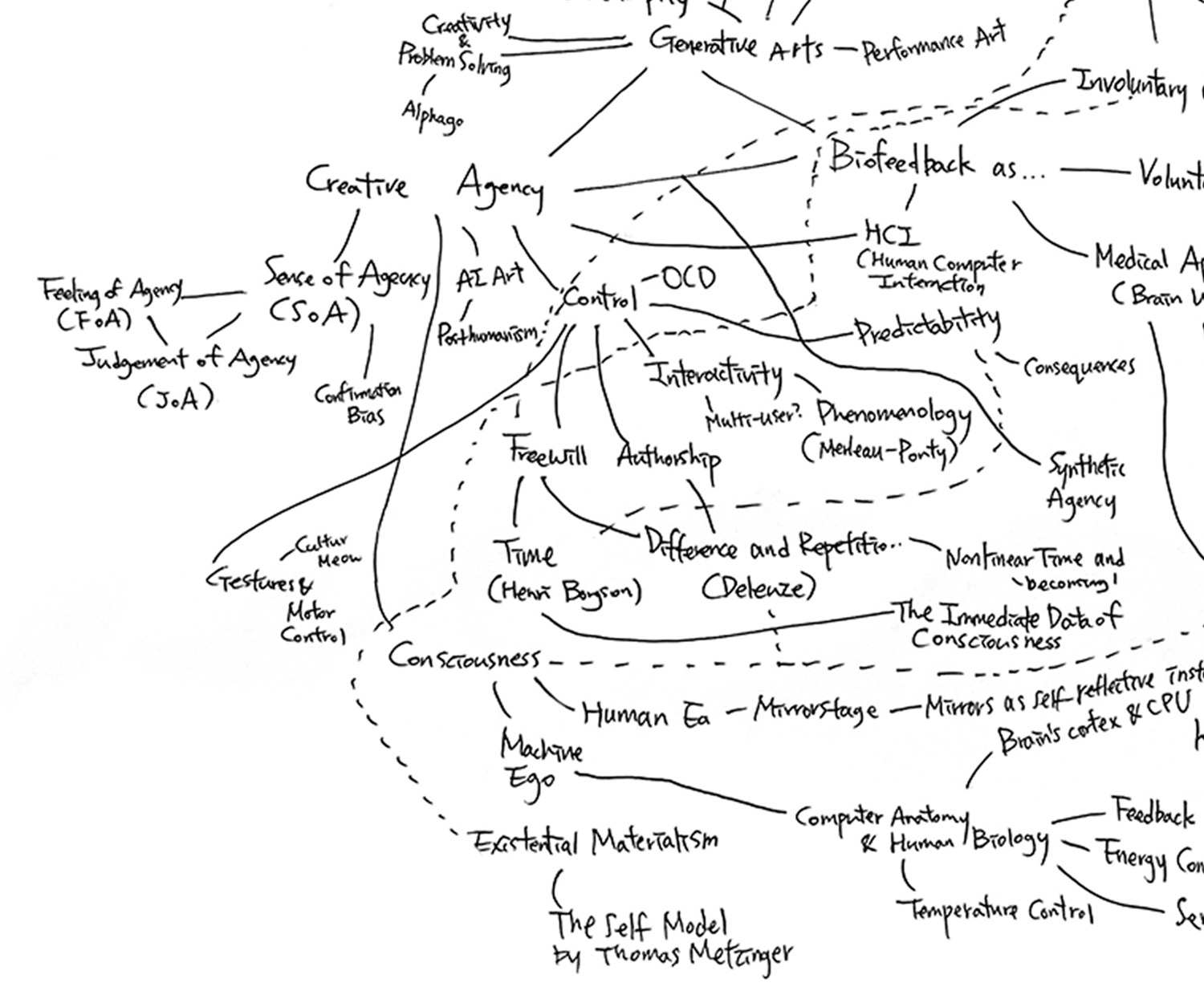

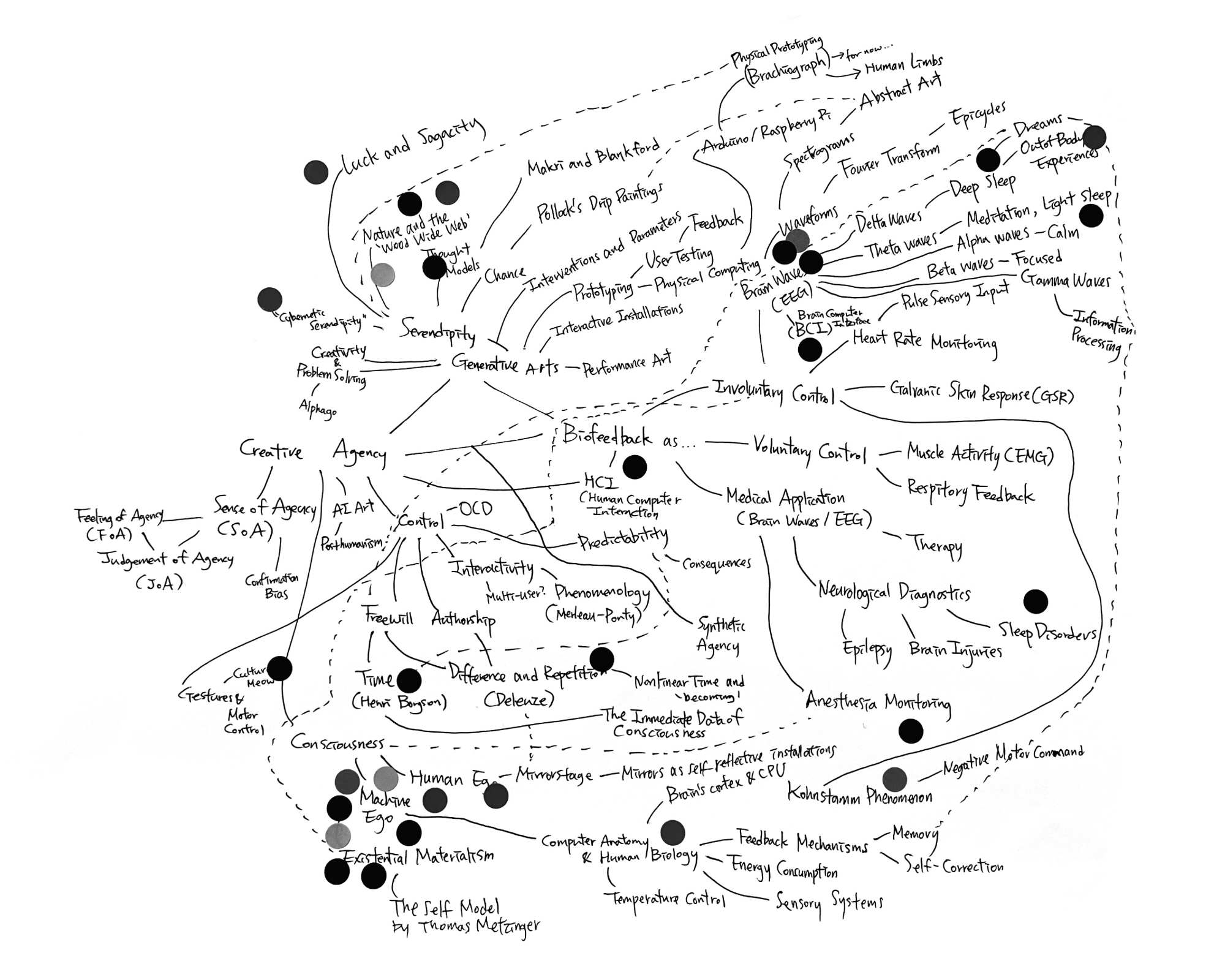

Mindmap

This week, we did an exercise where we made a mindmap of our directions and current research outcomes. At the core of my thinking are three themes: Serendipity, Biofeedback, and Creative Agency, which guide much of what I’m exploring at the current moment.

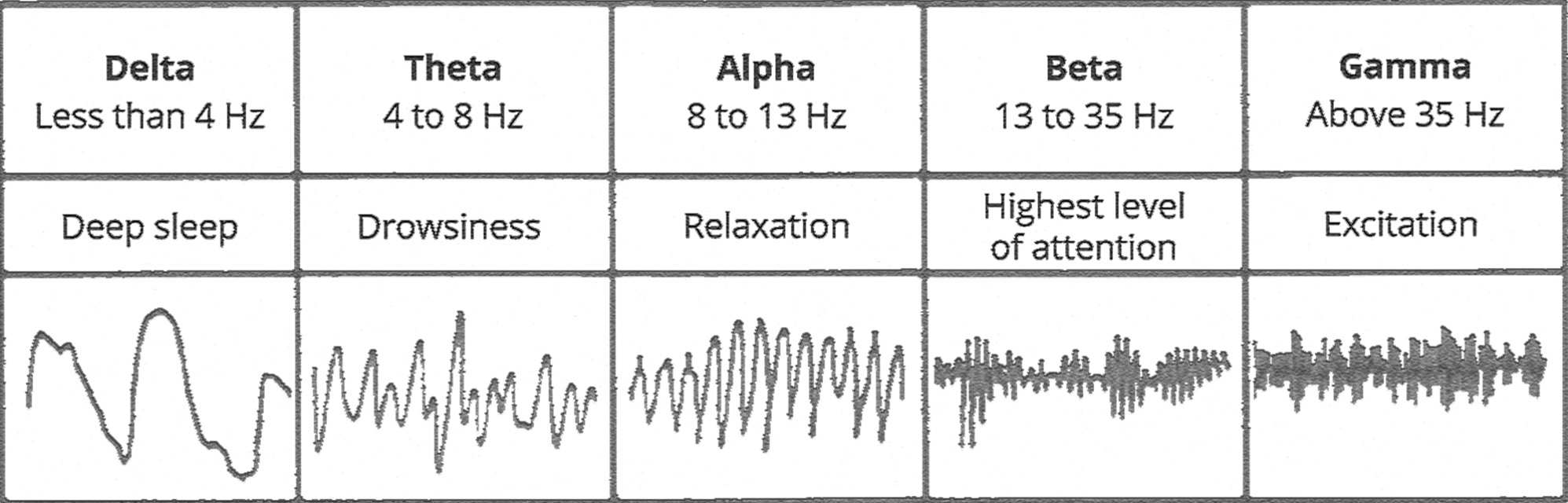

I start with biofeedback and the waveforms it produces. In the context of art, different brain states, ranging from Delta waves (associated with deep sleep) to Theta waves (linked to meditation) and Alpha waves (signaling calmness), influence how the body interacts with the world. Art, in turn, can reflect and express these states.

Then we have serendipity. The unpredictability of chance. This is where abstract ideas begin to take form. When I consider epicycles, ancient models of planetary motion come to mind. These also reflect how I visualise the cycles of thought and feedback. In my prototyping, I constantly adjust interventions and parameters, allowing the unexpected to unfold organically, much like natural processes in the “Wood Wide Web,” where trees and fungi communicate underground.

I am also considering the relationship between the self and external systems. Through concepts like out-of-body experiences and dreams, I examine how our consciousness interfaces with machines. My interest in neurological diagnostics and therapy shapes this investigation on how technology can be used to monitor and influence states of consciousness, or treat conditions such as epilepsy or sleep disorders. The idea of free will within these systems becomes intertwined with questions of authorship and creative agency. Where does human agency end, and where does the machine’s synthetic agency begin? In biofeedback systems, this line becomes increasingly blurred.

Another essential layer involves interactive installations, where participants' biofeedback, like their heart rate or muscle activity, drives the experience. I’m particularly drawn to the concept of multi-user phenomenology, where collective interaction with these systems (via HCI or other interfaces) transforms the artwork. This lack of intentional control raises interesting questions about how we judge agency and how we perceive confirmation when interacting with something beyond our direct control.

As I mentioned in week 0, I’m also intrigued by posthumanism and how we reconcile the human ego with the machine ego. The machine becomes an extension of the self, a reflection of our consciousness, much like Merleau-Ponty’s theories on embodiment suggest. Feedback mechanisms play a crucial role here, whether in the brain's regulation of temperature or in the way a machine adjusts its behavior based on sensory inputs. As machines begin to simulate human processes, they start creating their own self-models, further complicating the boundaries between human and machine.

My current focus is on physical prototyping, where I’ve been experimenting with a Brachiograph. This project symbolically represents human limbs, addressing the intersection of mechanical and biological systems. I’m interested in how human movement, often involuntary and shaped by unconscious feedback loops, can be mirrored in machines. Tools like Arduino and Raspberry Pi allow me to explore these interactions through physical computing. I’ve found spectrograms to be a fitting visual metaphor for these layers of feedback, mapping unseen data like EEG brain waves into something visible and interpretable.

Exercise Review

After sharing our mind maps, we did an exercise where we walked around, placing stickers on each other's maps to highlight what we thought was the most interesting direction. This exercise helped me see how my ideas might appear to others, especially when the connections I see aren't as obvious to an outsider. I realised that while the exercise was helpful, it might have been more effective if I had clearly written out my ideas and organised them, rather than just listing keywords in a brain dump.

I was surprised to find that most of the stickers were clustered around topics that were interesting as an isolated keyword but weren’t directly related to my main theme. Because of the messy layout, I think the participants hadn’t entirely followed my overall reasoning throughout the mind map. They also weren’t familiar with the framework from my readings that justified my focus on involuntary control as a way to explore creative agency. It made me realise that if I continue down this path of exploring involuntary control, I will need to provide a stronger, more intuitive justification that is easily understandable to readers that come across my topic for the first time.

Interestingly, the topic of machine ego, which received many votes, was not my current focus. It made sense that it was popular since it’s the concept that initially sparked my interest in this discourse (refer to week 0). However, my shift towards biofeedback and involuntary control, rather than the ego of the machine itself, was a deliberate decision. While machine ego is an exciting area, it’s extremely broad and closely tied to the entire concept of AI, which I feel could be overly ambitious and less specific than what I want to explore. By focusing on biofeedback, I can dive deeper into a more refined topic.

For now, I’m committed to the idea of involuntary control, at least until next week, when we will be presenting concept maps that draw clearer connections between our themes and ideas. Hopefully, this will give me an opportunity to better articulate the justification for my approach and framework.

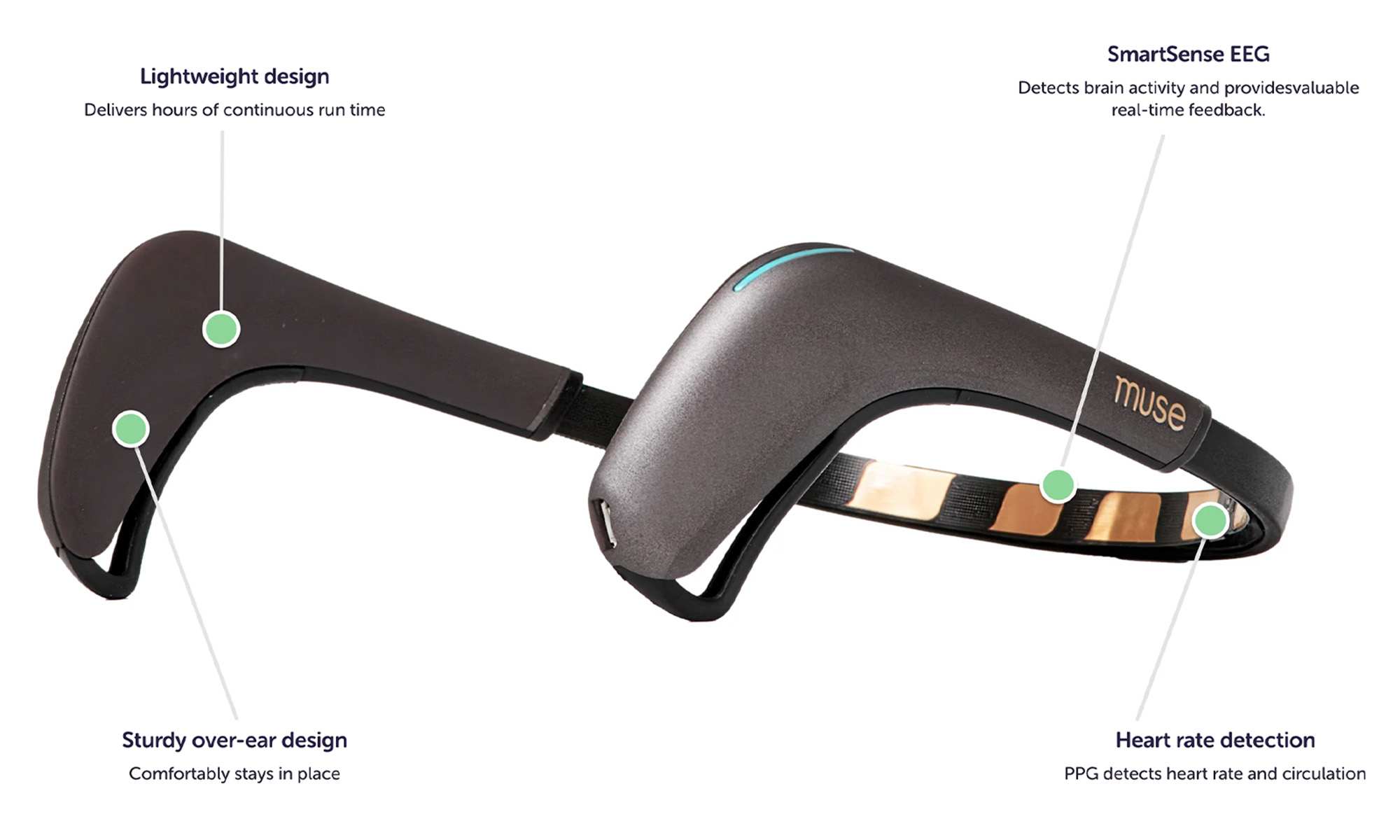

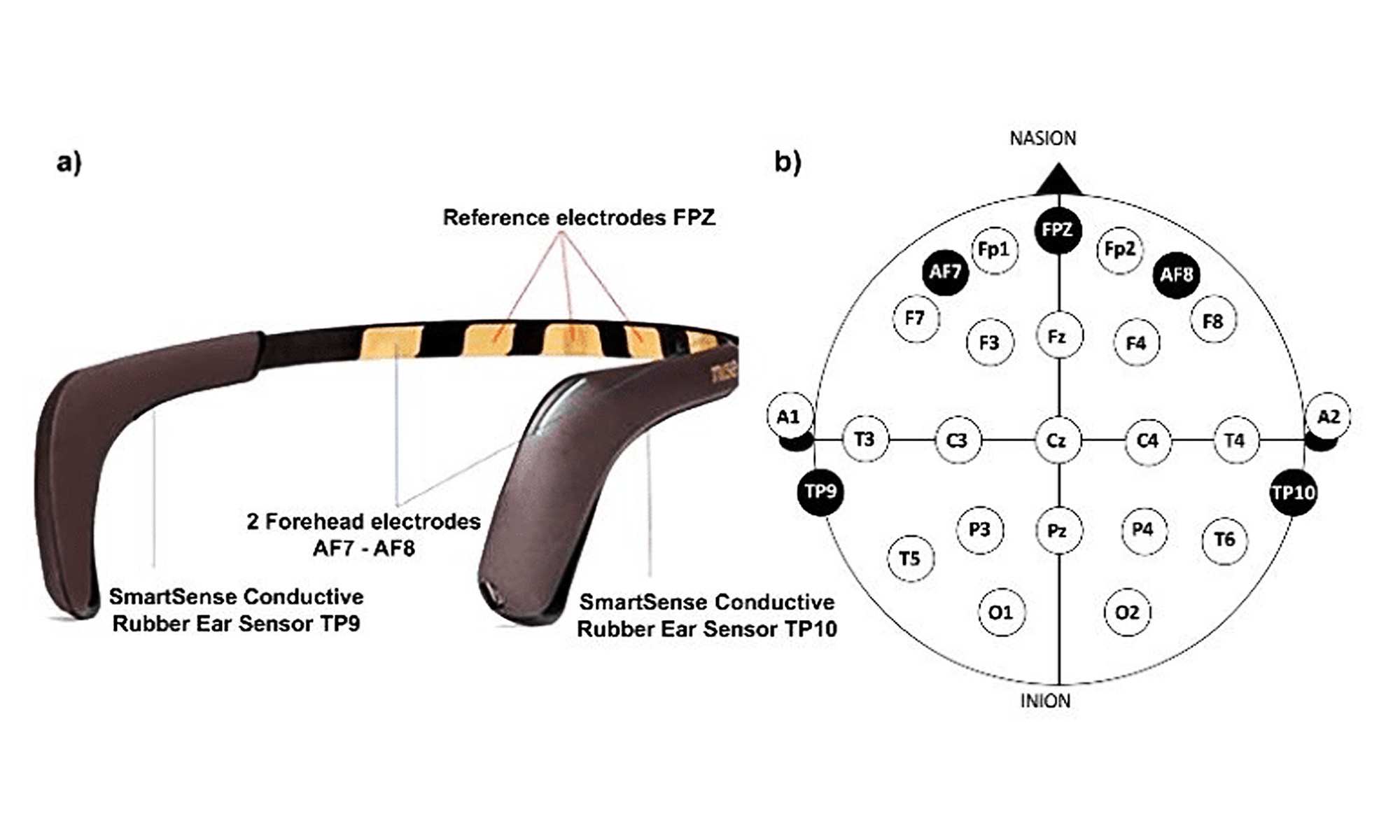

The MUSE Headset

The Muse headband is a consumer-grade EEG device primarily advertised as a tool for meditation, which can send real-time neurofeedback by measuring brain activity such as delta, theta, alpha, beta, and gamma waves, alongside raw brainwave data, accelerometer, and gyroscope motion. It is equipped with four electrodes (TP9, AF7, AF8, TP10), and can track brain waves at a 256 Hz sample rate and 12-bit sample depth, supporting real-time monitoring of neuronal activity.
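To put those figures in perspective, here is a rough Python sketch that works out what a 256 Hz, 12-bit, four-electrode stream implies. The variable names are my own, and the numbers are simply the specifications quoted above, not measurements from my device.

```python
# Figures quoted above for the Muse headband.
SAMPLE_RATE_HZ = 256
BIT_DEPTH = 12
CHANNELS = ["TP9", "AF7", "AF8", "TP10"]

# Highest frequency that can be represented without aliasing (Nyquist limit).
nyquist_hz = SAMPLE_RATE_HZ / 2

# Number of distinct amplitude steps a 12-bit sample can take.
quantisation_levels = 2 ** BIT_DEPTH

# Raw EEG samples arriving every second across all four electrodes.
samples_per_second = SAMPLE_RATE_HZ * len(CHANNELS)

print(nyquist_hz, quantisation_levels, samples_per_second)
```

A Nyquist limit of 128 Hz sits comfortably above the delta-to-gamma ranges I am interested in, which is why this sample rate is workable for band-based visuals.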

It also includes indicators for physical markers like blinks or jaw clenches, and can be a detailed tool for monitoring brain activity in various states of consciousness. Its portability and ease of use make it a suitable tool for real-world testing outside traditional lab settings. But we do need to keep in mind that its data quality won’t be as accurate as medical-grade EEG systems, and can be affected by environmental noise and irregular movement.

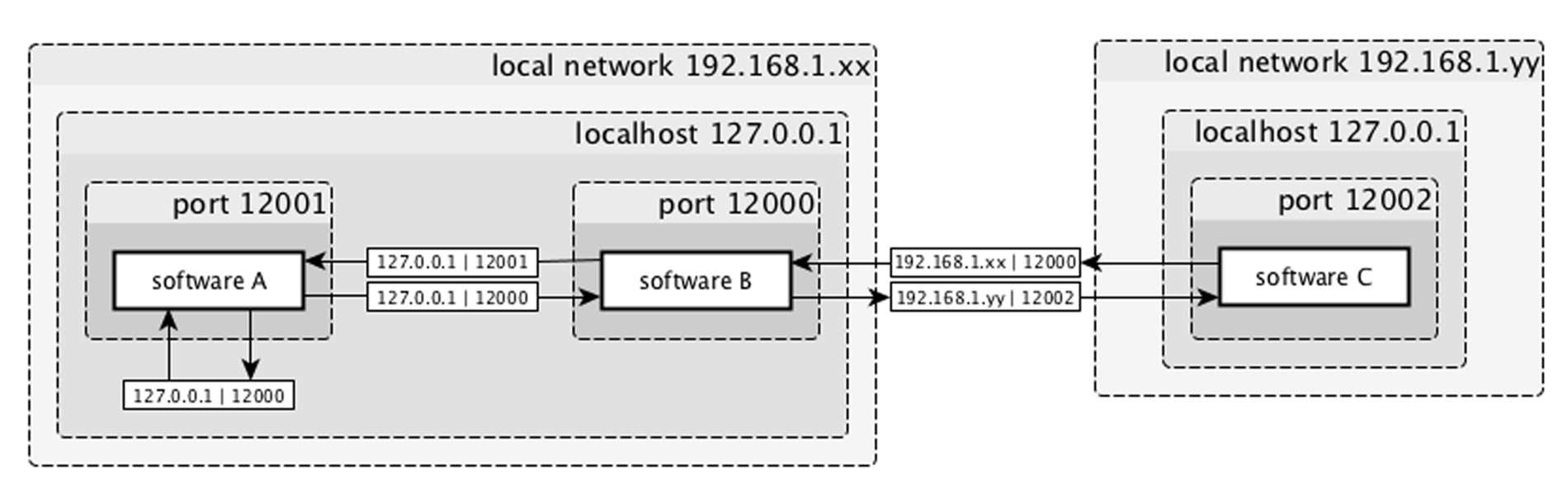

Mind Monitor

I am using the Mind Monitor app to send OSC output over a network connection. It records various brainwave data from the MUSE, including Power Spectral Density, a Fast Fourier Transform frequency breakdown, and raw electrical signals in microvolts. To configure it, open the app and go to the OSC settings. Set the OSC stream target IP to match your computer’s IP address. Ensure that the device running Mind Monitor and your computer are on the same Wi-Fi network (the Muse 2 itself pairs with that device over Bluetooth). Select a port (e.g., 7002) for the OSC stream. If you encounter connection issues, try different ports until the stream is stable.
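Before involving any creative software, it can help to check that packets are actually arriving. The following is a minimal sketch of a UDP listener in plain Python, assuming the example port 7002 from above. The function names are my own, the `/demo` address in the sample packet is made up, and the parser only understands float and integer arguments and skips OSC bundles, so treat it as a connectivity check rather than a full OSC implementation.

```python
import socket
import struct

def read_osc_string(data, offset):
    """Read a null-terminated OSC string; return it and the next aligned offset."""
    end = data.index(b"\x00", offset)
    text = data[offset:end].decode("ascii")
    # OSC strings are null-padded so that the next field starts on a 4-byte boundary.
    next_offset = (end + 4) & ~3
    return text, next_offset

def parse_osc_message(data):
    """Decode a single OSC message into (address, list of values)."""
    address, offset = read_osc_string(data, 0)
    type_tags, offset = read_osc_string(data, offset)
    values = []
    for tag in type_tags[1:]:  # skip the leading comma of the type tag string
        if tag == "f":
            values.append(struct.unpack_from(">f", data, offset)[0])
        elif tag == "i":
            values.append(struct.unpack_from(">i", data, offset)[0])
        else:
            raise ValueError(f"unsupported OSC type tag: {tag!r}")
        offset += 4  # both supported types occupy four bytes
    return address, values

def listen(port=7002, host="0.0.0.0"):
    """Print every OSC message received on the given UDP port (runs until stopped)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.bind((host, port))
    print(f"Listening for OSC on UDP port {port} ...")
    while True:
        data, _sender = sock.recvfrom(4096)
        if data.startswith(b"#bundle"):
            continue  # bundles are not handled in this sketch
        print(parse_osc_message(data))

# Hand-built sample packet so the parser can be tried without a headset.
sample = b"/demo\x00\x00\x00,f\x00\x00" + struct.pack(">f", 0.5)
print(parse_osc_message(sample))
```

Calling `listen()` from a terminal while the app is streaming should print one line per message. If nothing appears, the target IP, the port, or a firewall is the likely culprit.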

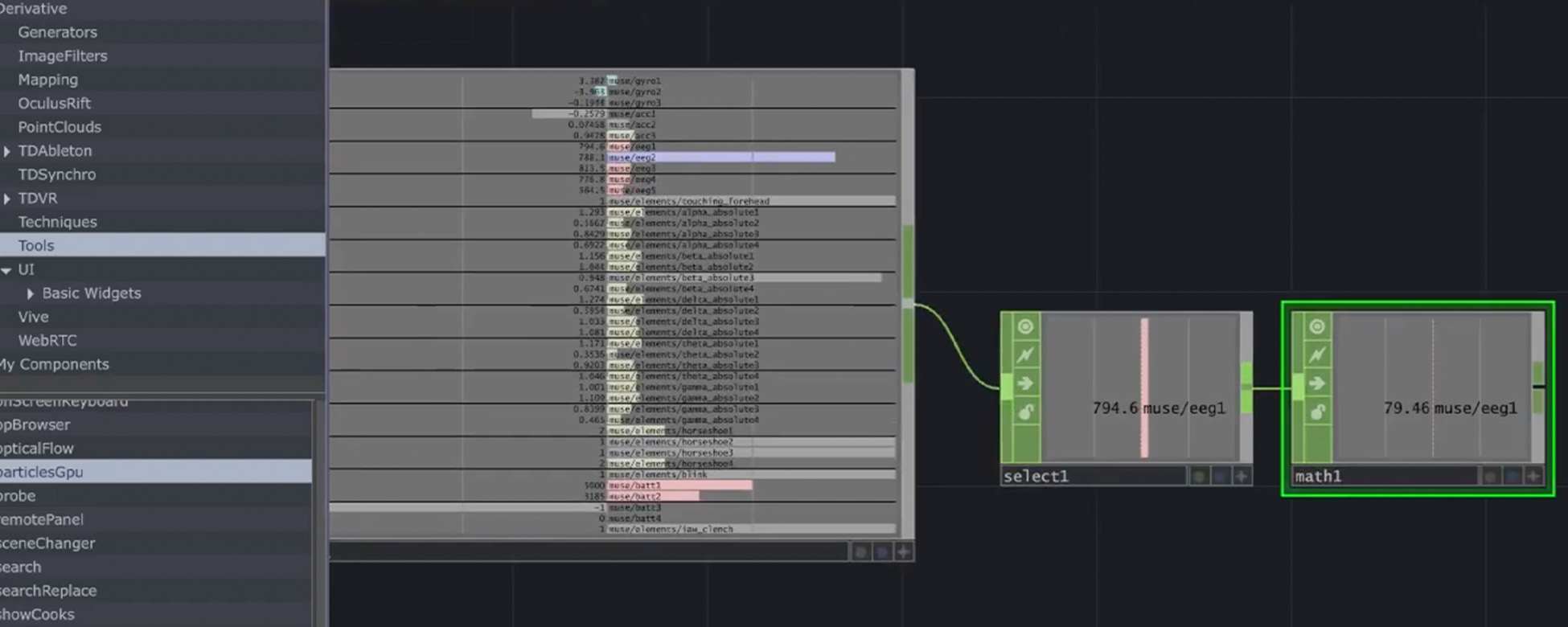

Connecting to TouchDesigner

Before connecting my Muse 2 EEG sensor to the BrachioGraph, I tested it with TouchDesigner, which comes with an OSC receiver node. To begin, I configured the Mind Monitor app by setting the OSC stream target to match my computer’s IP address and ensuring that the device running Mind Monitor and my computer were connected to the same Wi-Fi network.

Next, I set up TouchDesigner by adding an OSC In DAT node to receive the EEG data from the MUSE. After verifying the incoming data stream, I used a Select CHOP to isolate EEG signals like Delta, Theta, Alpha, Beta, and Gamma waves. I processed this data by converting it to absolute values using an Abs CHOP and labeled the network with a Null CHOP for better organisation.
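The select-then-absolute chain can also be sketched outside TouchDesigner. The Python below is only an analogy for what the two CHOPs do, not TouchDesigner code: the channel names and values are hypothetical and were not taken from a real recording.

```python
# Hypothetical snapshot of incoming channels; names and values are made up
# for illustration only.
incoming = {
    "delta": -0.42, "theta": 0.18, "alpha": -0.73,
    "beta": 0.31, "gamma": -0.05, "battery": 87.0,
}
BANDS = ("delta", "theta", "alpha", "beta", "gamma")

def select_bands(channels, names=BANDS):
    """Keep only the named channels (the role the Select CHOP plays)."""
    return {name: channels[name] for name in names if name in channels}

def absolute(channels):
    """Replace every value with its magnitude (the role of the Abs CHOP)."""
    return {name: abs(value) for name, value in channels.items()}

processed = absolute(select_bands(incoming))
print(processed)
```

Taking magnitudes means every band can drive a visual parameter that expects a non-negative input, at the cost of discarding the sign of the original value.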

Processing

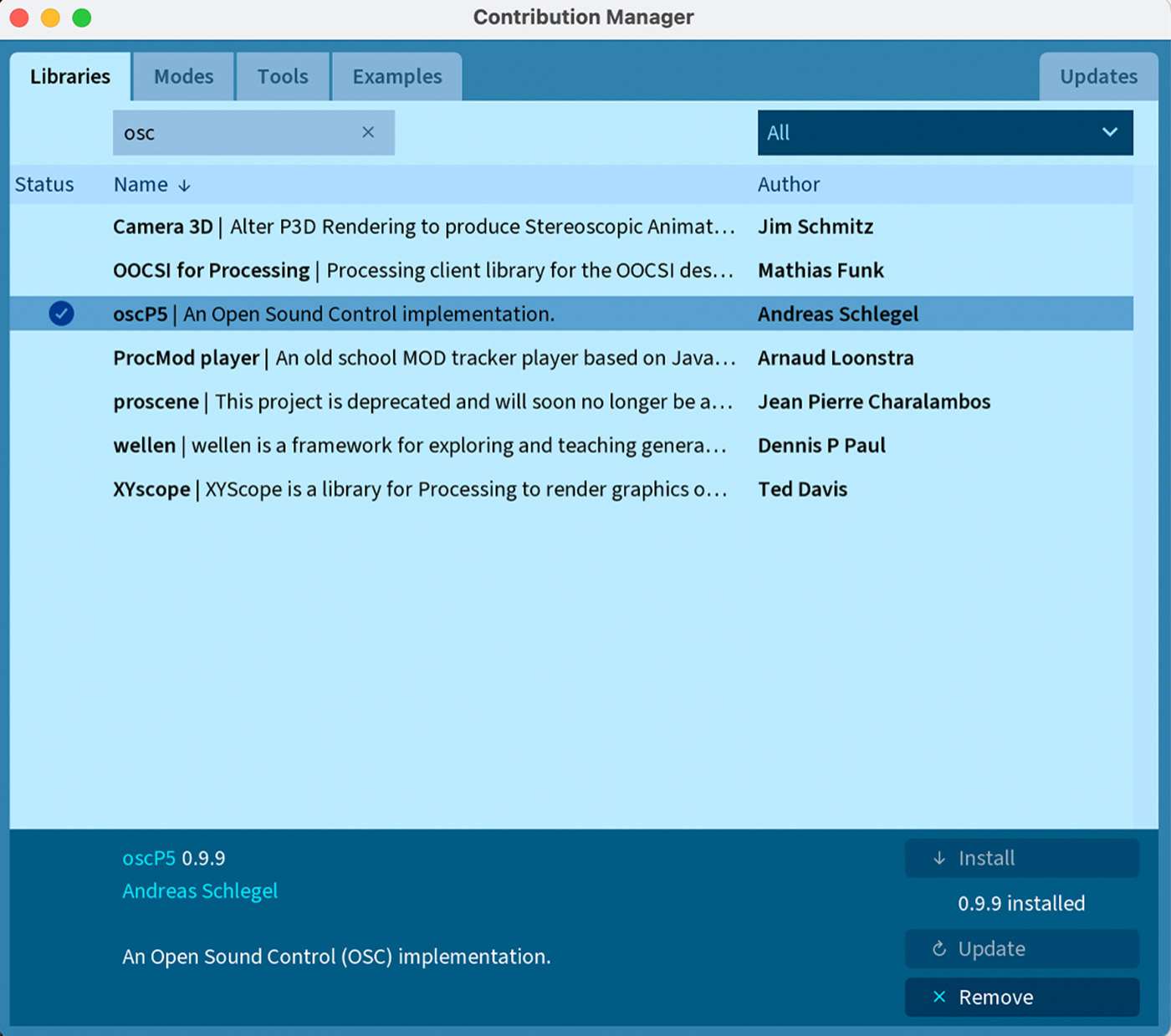

I installed the oscP5 library to send and receive messages over the Open Sound Control protocol. OSC is a flexible, high-precision protocol often used in multimedia applications for network communication between computers, devices, and applications, particularly in music, visuals, and real-time control scenarios.

As I mentioned in week 2, this library is useful in interactive projects where real-time data needs to be transferred between devices, as in my case, where I am using an EEG device to affect Processing visuals. I thought of asking Andreas, my supervisor and the creator of oscP5(!), for a walkthrough, but his website explained the process very clearly, and I managed to connect it directly to Processing.

EEG

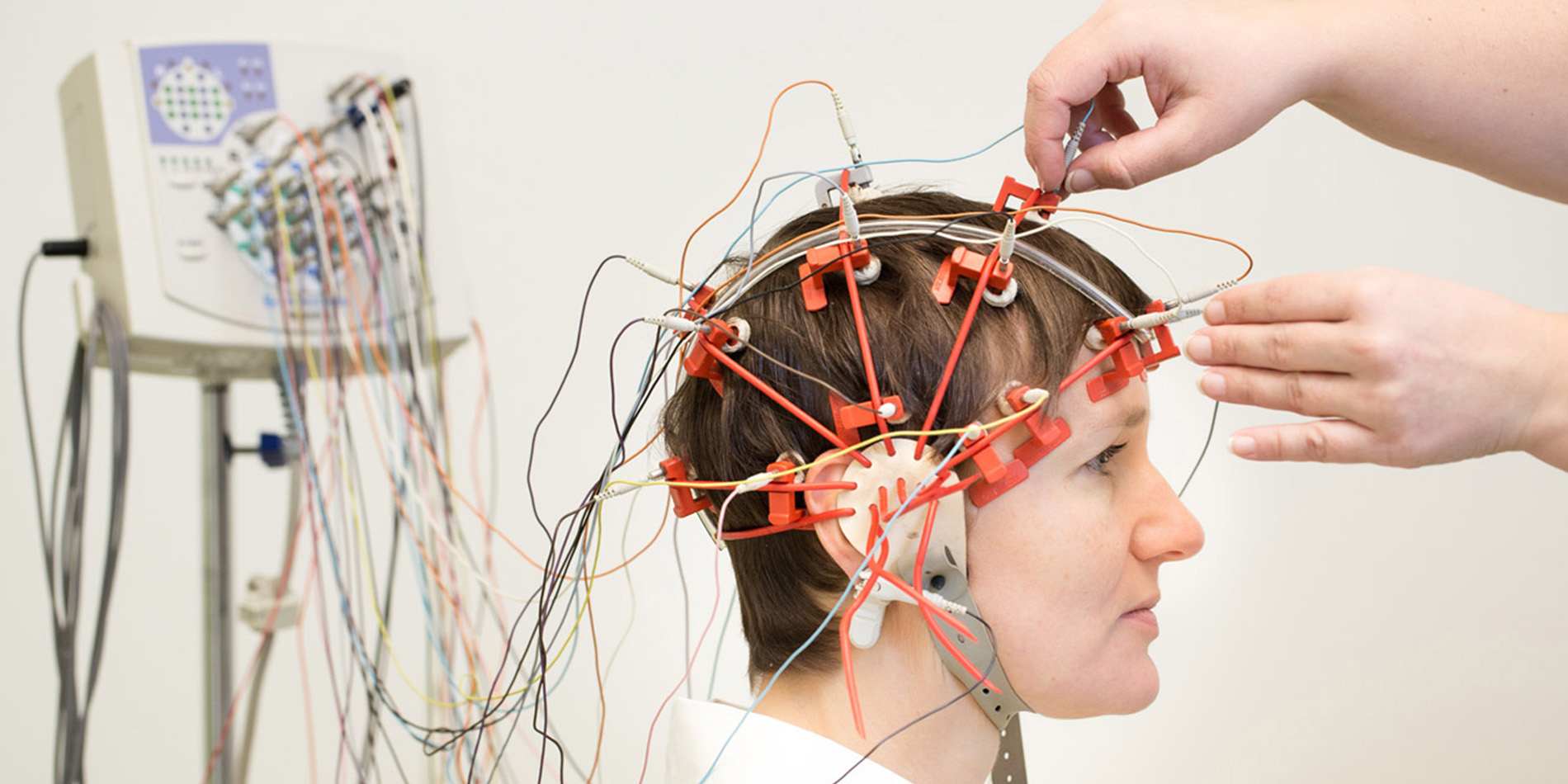

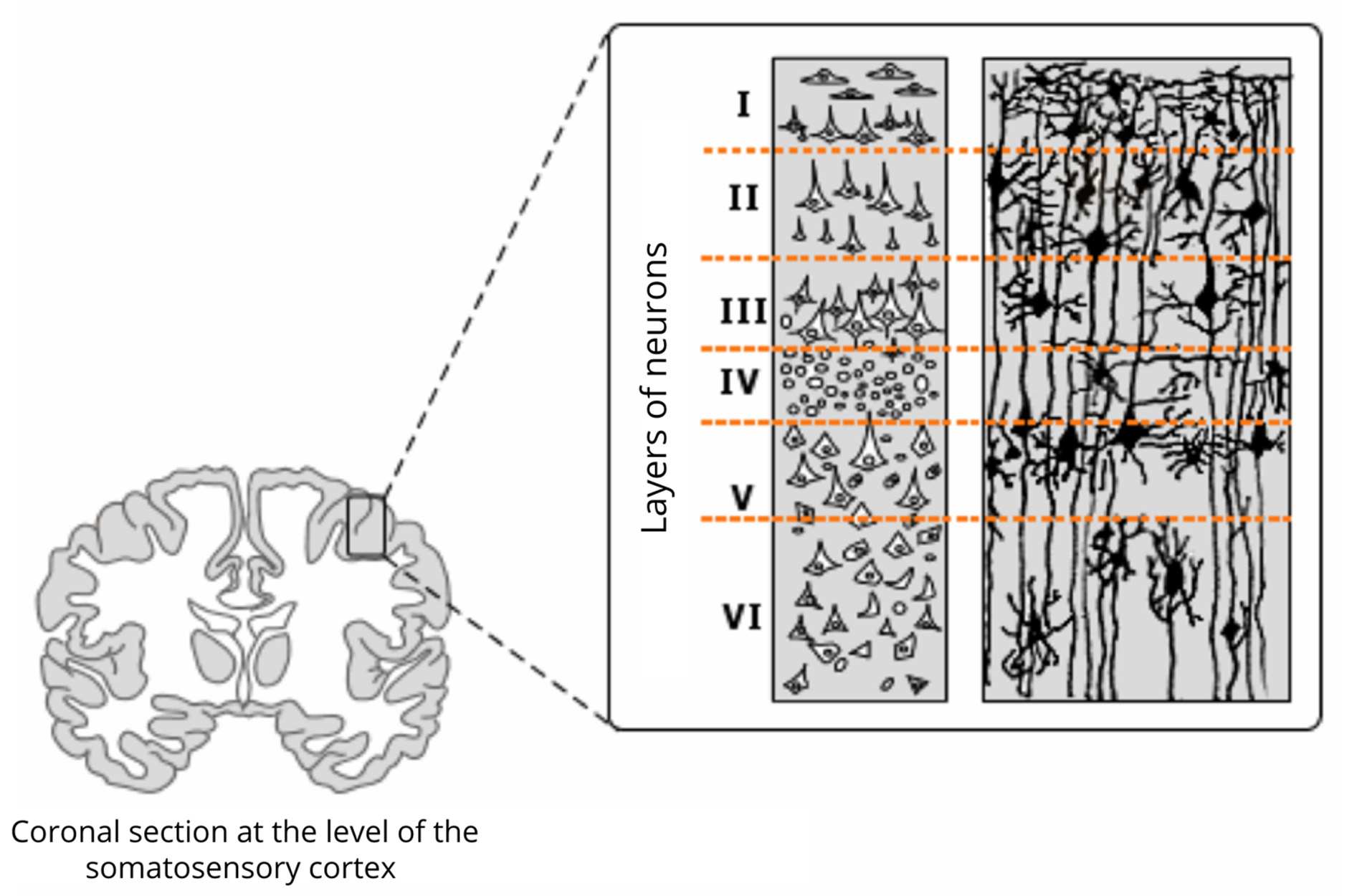

A very basic, summarised introduction to EEG. Electroencephalography is a method for recording electrical activity of the brain through electrodes placed on the scalp. EEG detects the brain's electrical signals, or brainwaves, which are generated by neurons firing within the brain. These signals are used to study brain function in medical, research, and artistic contexts.

Small electrodes are placed on the scalp in a standard pattern known as the 10-20 system. These electrodes detect the electrical activity produced by neurons firing in the brain, and they send these signals to an amplifier and then to a recording device.

Brainwaves

The brain operates through 'electrical impulses'. These impulses create patterns known as brainwaves, which can be classified into several types based on their frequency:

- Delta waves (0.5 – 4 Hz): Deep sleep.

- Theta waves (4 – 8 Hz): Relaxation, drowsiness, or meditation.

- Alpha waves (8 – 13 Hz): States of rest.

- Beta waves (13 – 30 Hz): Active thinking, focus, or anxiety.

- Gamma waves (>30 Hz): Problem-solving.
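The band boundaries above can be turned into a small lookup. The sketch below, in plain Python, finds the strongest frequency in a synthetic one-second signal using a naive discrete Fourier transform and then names its band. The 10 Hz test tone, the function names, and the choice to treat each lower boundary as inclusive are all my own assumptions, and a real pipeline would use a proper FFT library on real EEG samples.

```python
import cmath
import math

# (name, lower bound in Hz, upper bound in Hz); lower bound treated as inclusive.
BANDS = [
    ("delta", 0.5, 4.0),
    ("theta", 4.0, 8.0),
    ("alpha", 8.0, 13.0),
    ("beta", 13.0, 30.0),
]

def classify_band(freq_hz):
    """Return the brainwave band for a frequency, or None below 0.5 Hz."""
    if freq_hz < 0.5:
        return None
    for name, low, high in BANDS:
        if low <= freq_hz < high:
            return name
    return "gamma"  # everything from 30 Hz upwards

def dominant_frequency(samples, sample_rate_hz):
    """Return the frequency (Hz) of the strongest non-DC bin of a naive DFT."""
    n = len(samples)
    best_bin, best_magnitude = 0, 0.0
    for k in range(1, n // 2):
        coefficient = sum(
            samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        if abs(coefficient) > best_magnitude:
            best_bin, best_magnitude = k, abs(coefficient)
    return best_bin * sample_rate_hz / n

SAMPLE_RATE_HZ = 256
# One second of a pure 10 Hz sine wave standing in for an EEG trace.
signal = [math.sin(2 * math.pi * 10 * t / SAMPLE_RATE_HZ) for t in range(SAMPLE_RATE_HZ)]

peak = dominant_frequency(signal, SAMPLE_RATE_HZ)
print(peak, classify_band(peak))  # prints: 10.0 alpha
```

Real EEG is never a single clean tone, so in practice the power in each band is compared rather than a single peak being picked.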

Applications

- Medical diagnostics for conditions such as epilepsy, sleep disorders, and brain death.

- Cognitive neuroscience, to study brain processes such as attention, memory, learning, and emotion.

- Neurofeedback for biofeedback training, where individuals learn to control brainwave activity to improve attention or relaxation.

- Brain-computer interfaces (BCIs), with possible applications in assistive technology for people with disabilities.

OSC

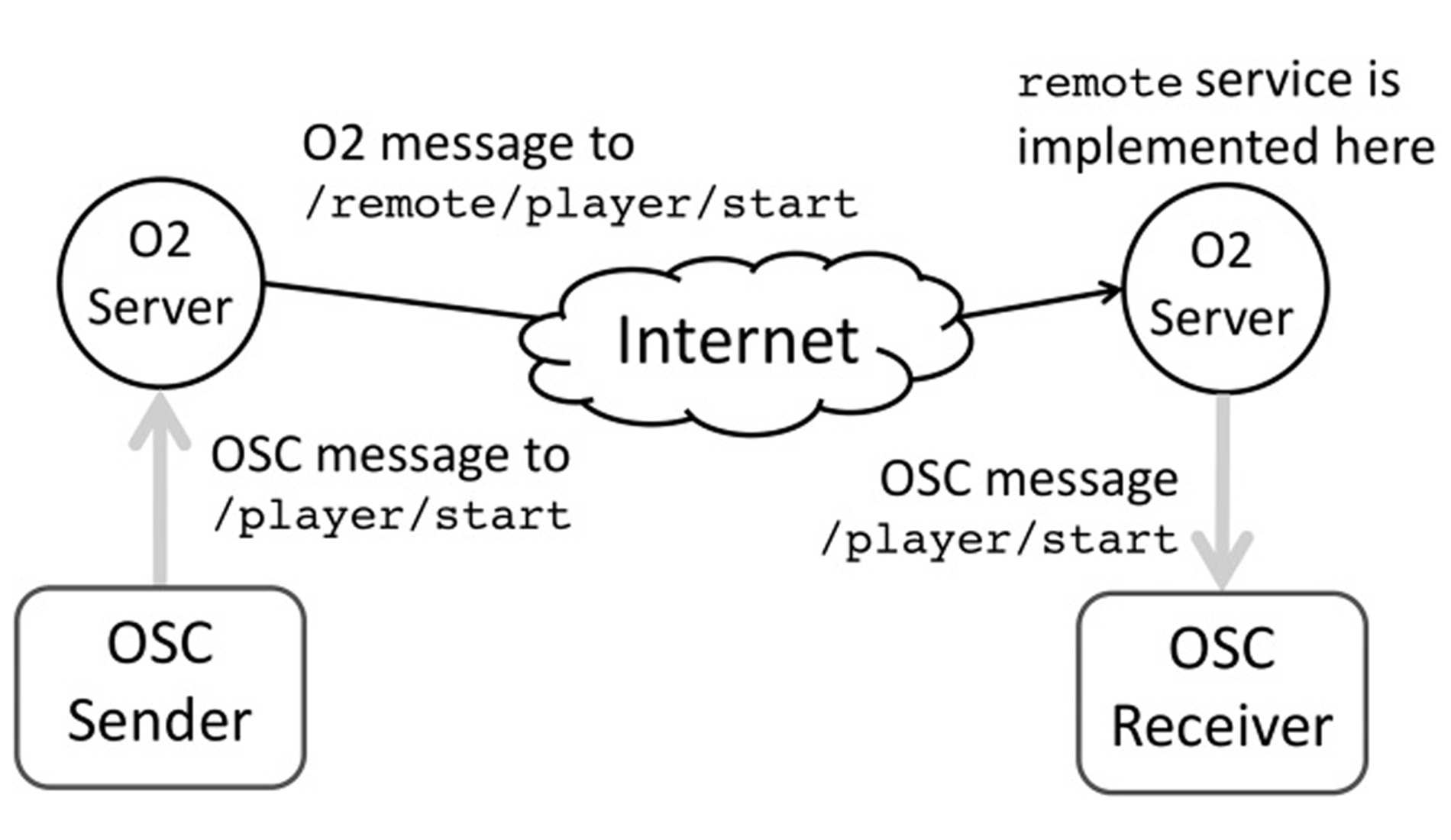

Open Sound Control (OSC) is a protocol used for communication between multimedia devices, computers, and applications. It is primarily designed for real-time performance and interaction, often used in music, visual art, and other interactive multimedia applications. OSC allows for high-speed, high-precision data transmission over networks (e.g., local networks, internet) and is more flexible than older protocols such as MIDI.

Message-Based Protocol

OSC is based on a message structure, where each message consists of an address pattern and one or more arguments. The address pattern resembles a URL or file path, allowing messages to be routed to specific parts of the system (e.g., /synth/frequency or /visuals/color). Arguments can carry different data types, such as integers, floats, strings, and even complex structures like arrays.
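To make that structure concrete, here is a minimal sketch of how a message is laid out in bytes, written in plain Python with only the standard library. The function names are my own, and only integer, float, and string arguments are handled. In the OSC 1.0 format, strings are null-terminated and padded to a multiple of four bytes, and numbers are big-endian.

```python
import struct

def osc_string(text):
    """Encode text as an OSC string: ASCII, null-terminated, padded to 4 bytes."""
    raw = text.encode("ascii") + b"\x00"
    padding = (-len(raw)) % 4
    return raw + b"\x00" * padding

def encode_osc_message(address, *args):
    """Build one OSC message from an address pattern and its arguments."""
    type_tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, float):
            type_tags += "f"
            payload += struct.pack(">f", arg)  # 32-bit big-endian float
        elif isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)  # 32-bit big-endian integer
        elif isinstance(arg, str):
            type_tags += "s"
            payload += osc_string(arg)
        else:
            raise TypeError(f"unsupported argument type: {type(arg).__name__}")
    return osc_string(address) + osc_string(type_tags) + payload

message = encode_osc_message("/synth/frequency", 440.0)
print(len(message), message)
```

The result is 28 bytes: 20 for the padded address, 4 for the type tag string `,f`, and 4 for the float itself.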

High Precision

OSC is often used in environments where precise control and synchronization are required, such as music performances, interactive installations, or live visuals. It supports a wide range of data types and can handle high-resolution data, making it more suitable for these scenarios than older protocols like MIDI, whose standard controller values are limited to 7 bits (0 to 127).
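The difference in resolution is easy to see with a quick comparison. This Python sketch squeezes the same value through a 7-bit scale and through a 32-bit float, the type OSC uses for float arguments, and prints the rounding error of each. The input value and function names are arbitrary choices of mine.

```python
import struct

def to_7bit(value):
    """Quantise a value in [0, 1] to one of 128 steps and map it back."""
    step = round(value * 127)
    return step / 127

def to_float32(value):
    """Round-trip a value through a 32-bit big-endian float."""
    return struct.unpack(">f", struct.pack(">f", value))[0]

original = 0.123456
error_7bit = abs(original - to_7bit(original))
error_float32 = abs(original - to_float32(original))

print(f"7-bit error:   {error_7bit:.2e}")
print(f"float32 error: {error_float32:.2e}")
```

With only 128 steps, slow changes in a brainwave value would show up as visible jumps, whereas the float keeps them smooth.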

Network Communication

OSC messages can be sent over local networks (e.g., via UDP or TCP) or the internet, allowing remote communication between devices and applications. This feature makes it highly adaptable to various setups, enabling, for example, remote control of synthesisers, lighting rigs, visual projections, or even robots.
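As a small illustration of the UDP case, the sketch below sends one OSC message from a socket to another socket on the same machine and checks that it arrives unchanged. It uses only Python's standard library. The `/ping` address and the function name are hypothetical, and in a real setup the sender and receiver would sit on different devices.

```python
import socket

# A minimal, argument-free OSC message: the address "/ping" and an empty
# type tag string ",", each null-padded to a multiple of four bytes.
PING = b"/ping\x00\x00\x00,\x00\x00\x00"

def loopback_round_trip(packet, timeout_s=2.0):
    """Send one datagram to a local UDP socket and return what arrives."""
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    receiver.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    receiver.settimeout(timeout_s)
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        sender.sendto(packet, receiver.getsockname())
        data, _address = receiver.recvfrom(4096)
        return data
    finally:
        sender.close()
        receiver.close()

received = loopback_round_trip(PING)
print(received == PING)
```

UDP does not guarantee delivery or ordering, which is usually acceptable for a continuous sensor stream where a newer value soon replaces a lost one.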

Interoperability

OSC is platform-agnostic and works across different software and hardware systems. This allows for communication between various programs (e.g., Ableton Live, Max/MSP, TouchDesigner, Processing) and devices (e.g., synthesisers, Arduino-based systems, interactive installations).