10

12 Tone Surrealism:

EEG to Audio Visualisation

14.10.2024 ~ 20.10.2024

Automatic Music?

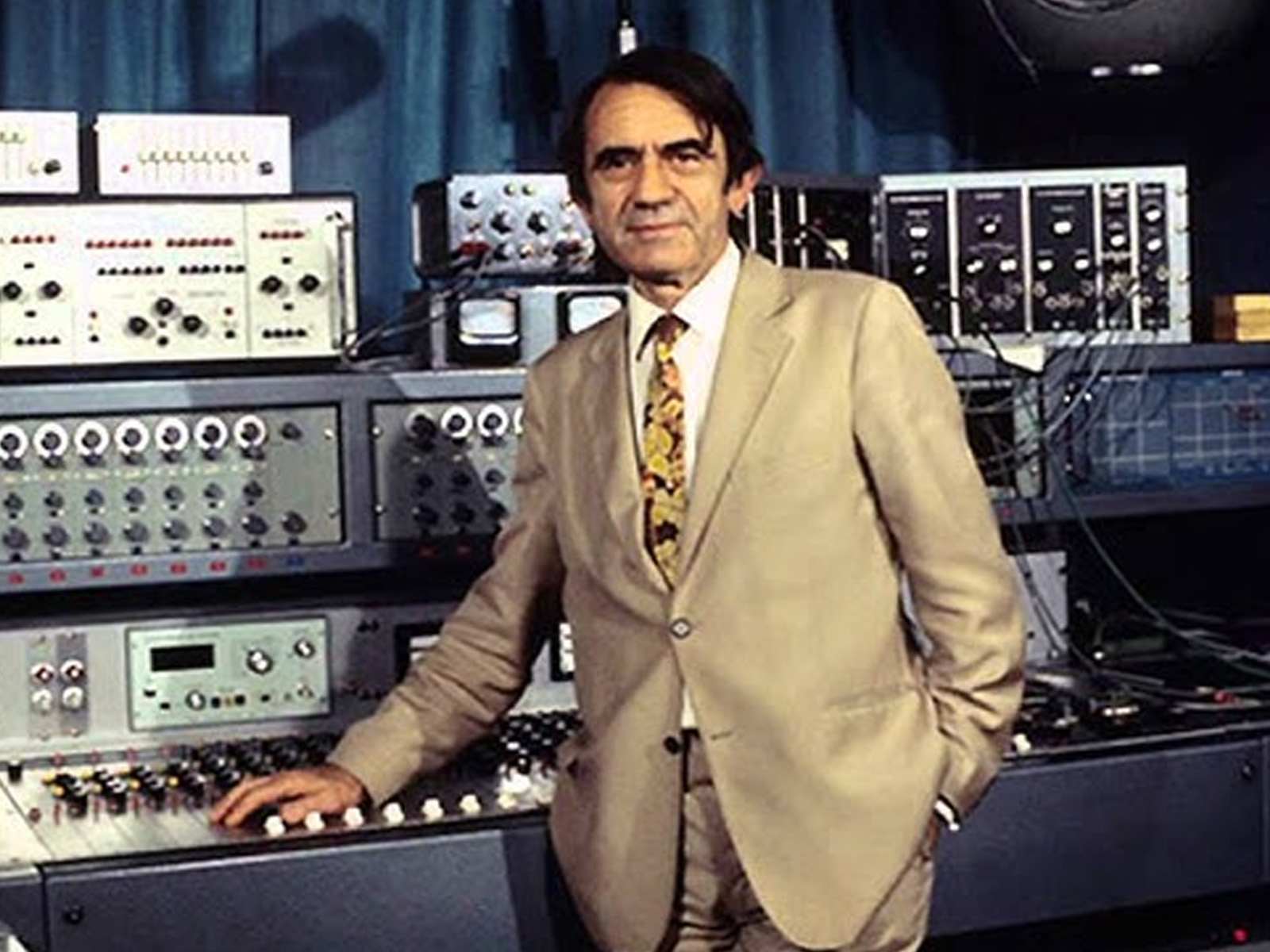

Although I couldn't find a definitive technique for surrealism in music, there are works that some describe as surrealist music. These works often defy traditional structures and embrace spontaneity and dissonance. Some examples I could find include John Cage’s chance operations and Pierre Schaeffer’s musique concrète, which use ambient recordings and sound collage to blur the line between music and everyday noise.

Schaeffer's musique concrète used environmental recordings, such as voices, machinery, and natural sounds, instead of instruments. These sounds are spliced, reversed, or otherwise manipulated to create compositions that emphasise texture and tone over melody, breaking the boundary between music and noise. While in the 1940s and 1950s it relied on physical media such as disc recordings and reel-to-reel tape splicing, modern techniques typically involve digital software.

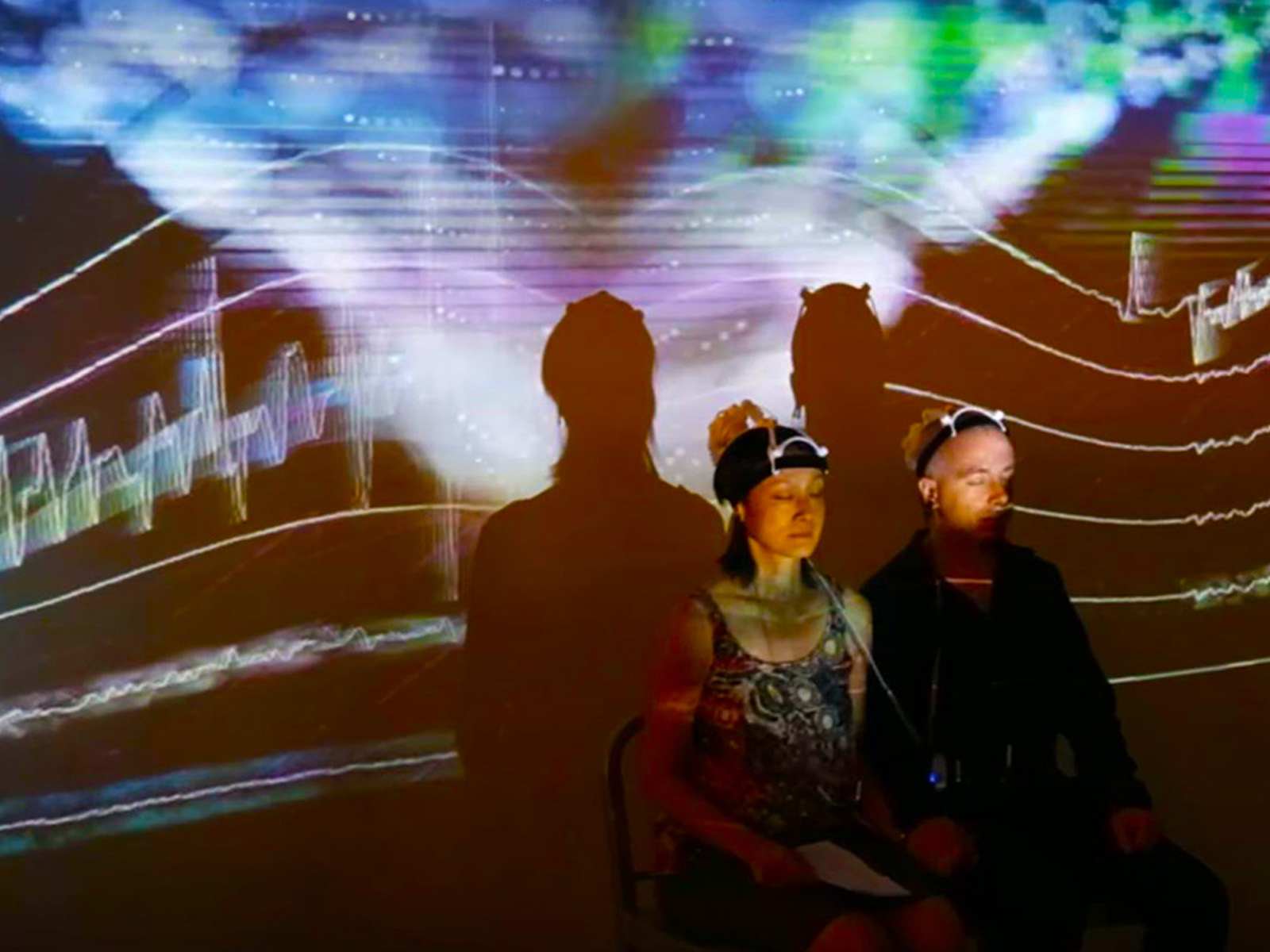

EEG to Music

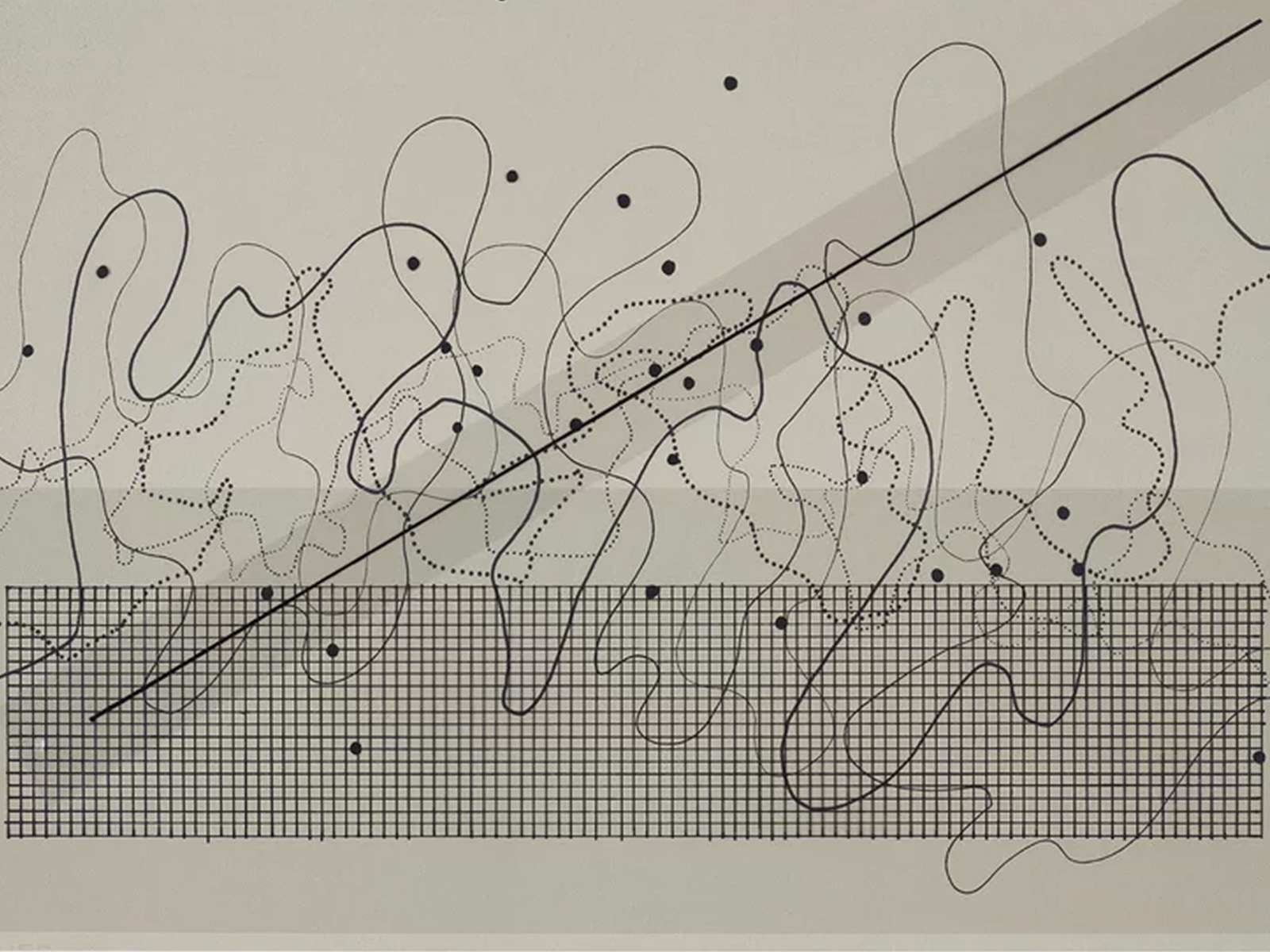

Making music from brain activity has been explored increasingly over the past decade, but the practice has been around since the mid-20th century. These case studies show how EEG data can be translated into music.

Another instance is the Encephalophone by neurologist and musician Thomas Deuel. This is a brain-controlled musical instrument designed for people with limited mobility. It maps brainwave patterns such as alpha waves to a synthesizer, so users can generate sound by focusing.

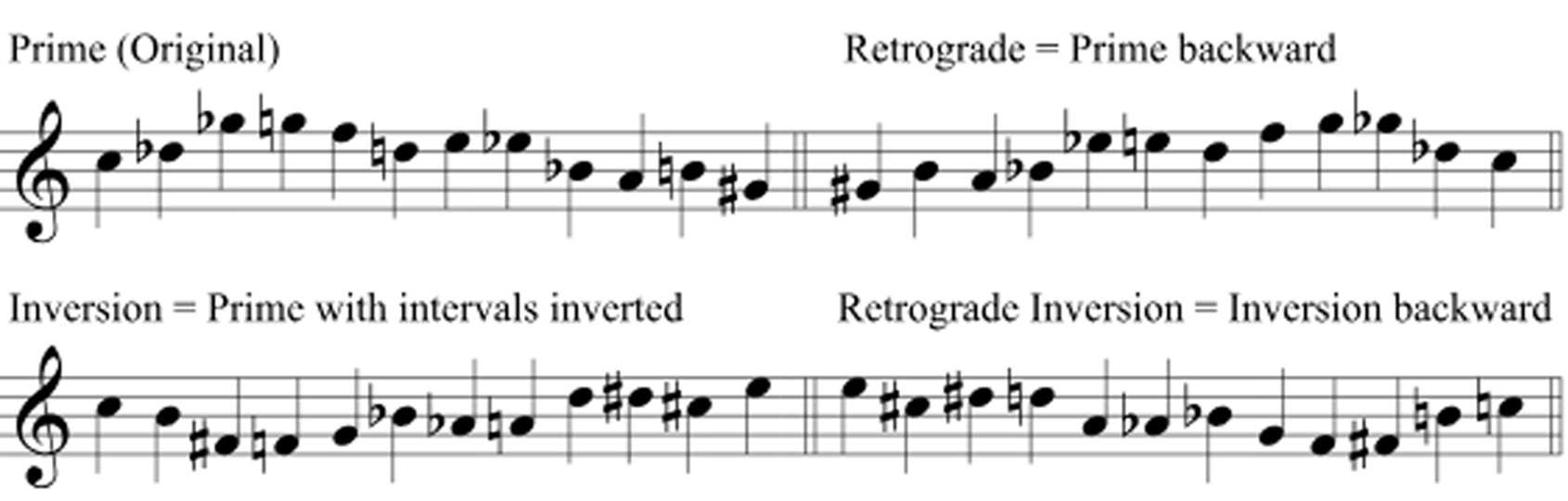

12 Tone Surrealism

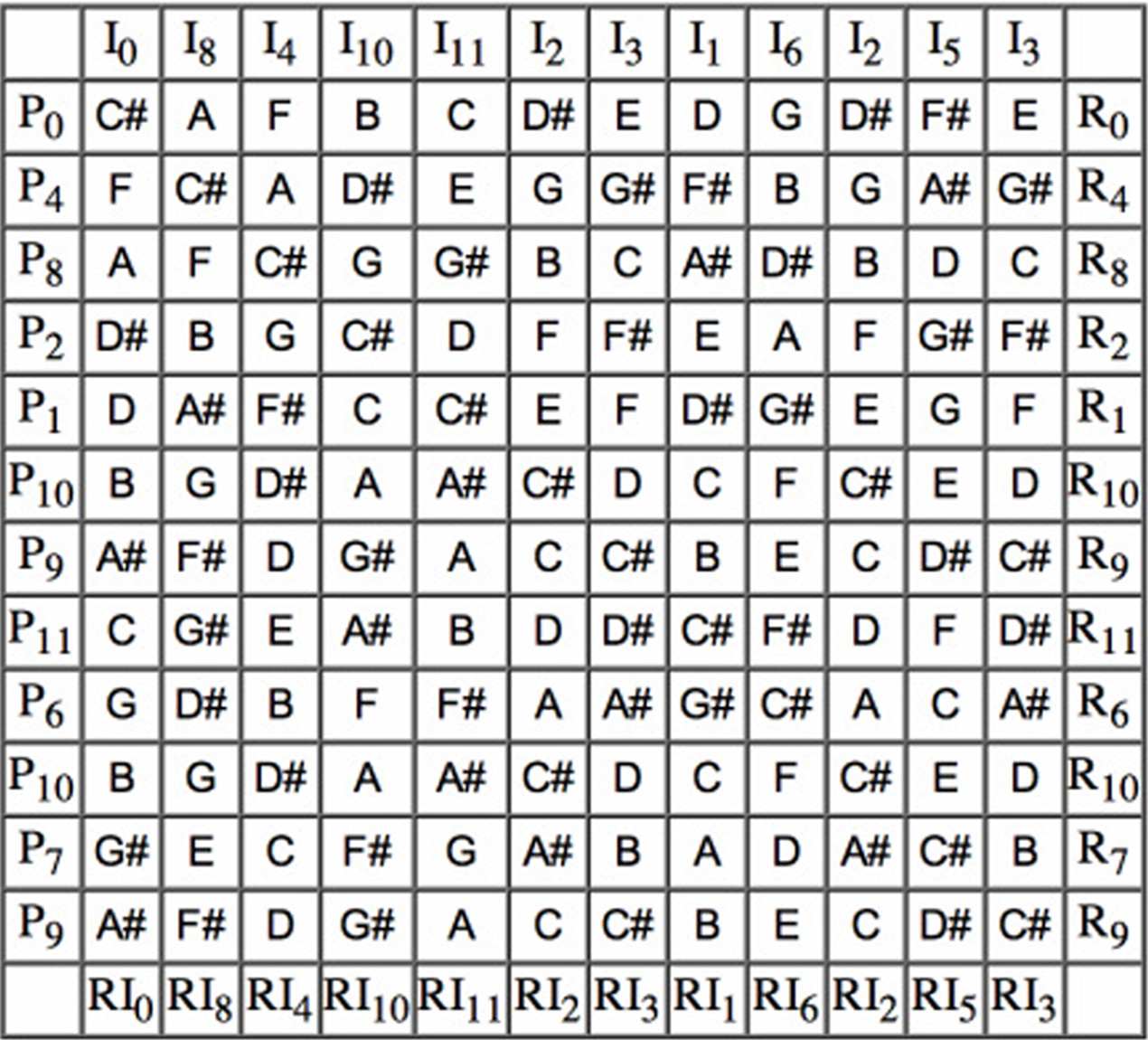

Moving on to my own experiments: the title is derived from 12-tone serialism. Developed by Arnold Schoenberg, 12-tone serialism is a compositional method that treats each of the 12 notes of the chromatic scale equally, moving beyond the traditional Western focus on a central key or "home" note.

The main sequence is the tone row, which uses each of the 12 notes exactly once before any note repeats and forms the foundation of the piece. This row can be transformed in several ways (reversed, inverted, or both) to generate musical material while maintaining equal importance for each note.
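To make the row transformations concrete, here is a minimal Python sketch. The project itself runs in Processing; Python is used here only to keep the example short. The row `ROW` is an arbitrary example rather than one used in the project, and the function names are illustrative.

```python
# Pitch classes: 0 = C, 1 = C#, ... 11 = B.
# ROW is an arbitrary example row (an assumption, not taken from the project).
ROW = [0, 11, 3, 4, 8, 7, 9, 5, 6, 1, 2, 10]


def is_tone_row(row):
    """A valid tone row contains each of the 12 pitch classes exactly once."""
    return sorted(row) == list(range(12))


def retrograde(row):
    """Reversed: the row played backwards."""
    return row[::-1]


def inversion(row):
    """Inverted: every interval is mirrored around the first note."""
    first = row[0]
    return [(2 * first - pitch) % 12 for pitch in row]


def retrograde_inversion(row):
    """Both: the inverted row played backwards."""
    return retrograde(inversion(row))
```

Each transformation of a valid row is itself a valid row, which is why every note keeps the same weight however the material is varied.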

This technique has often been criticised for "reducing" music to algorithms, but I see an opportunity to reverse this concern by using the technique to turn data into music. When I listen to 12-tone serialism, its structure feels almost mechanical, as if it were music composed for machines rather than humans.

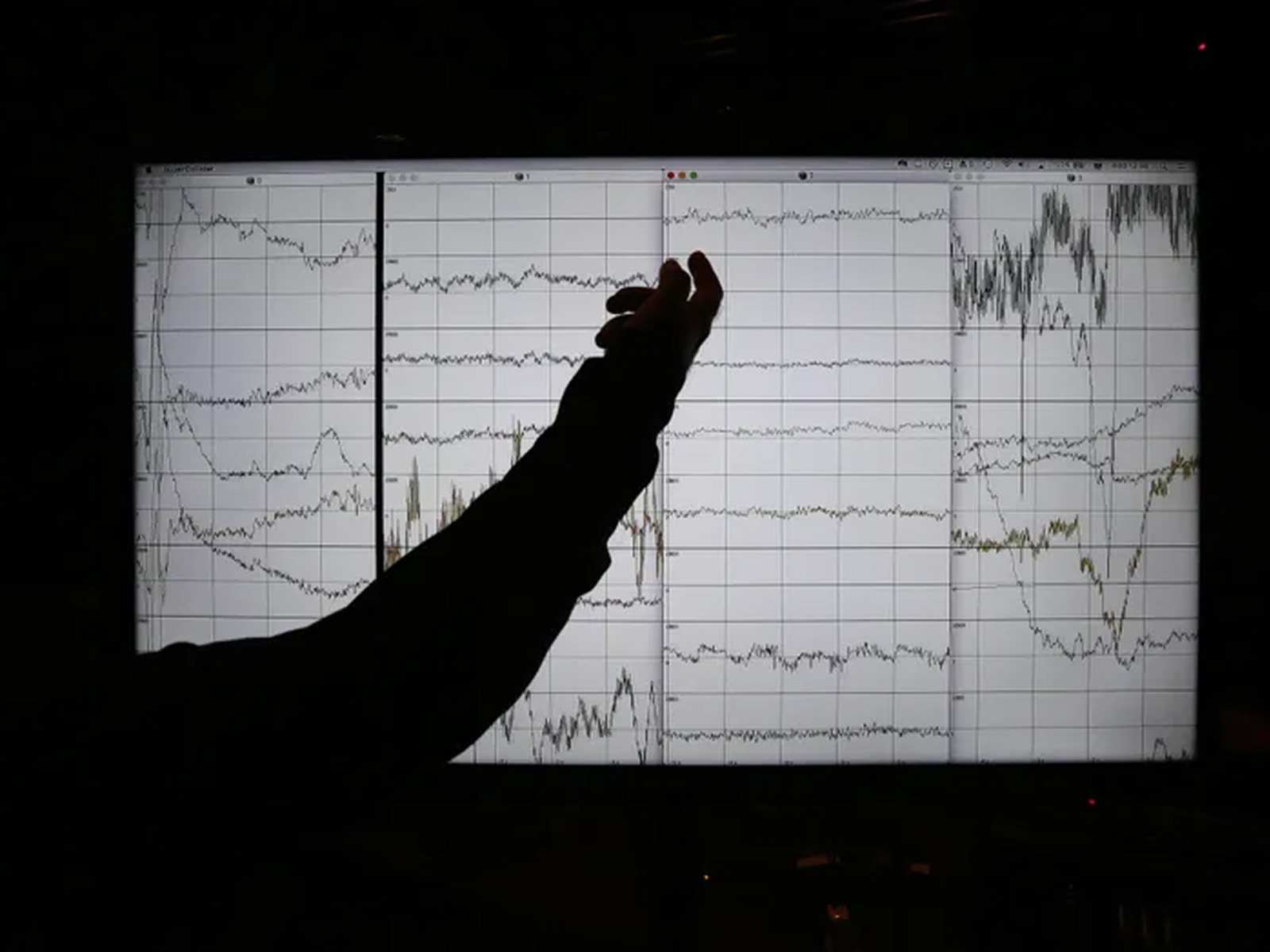

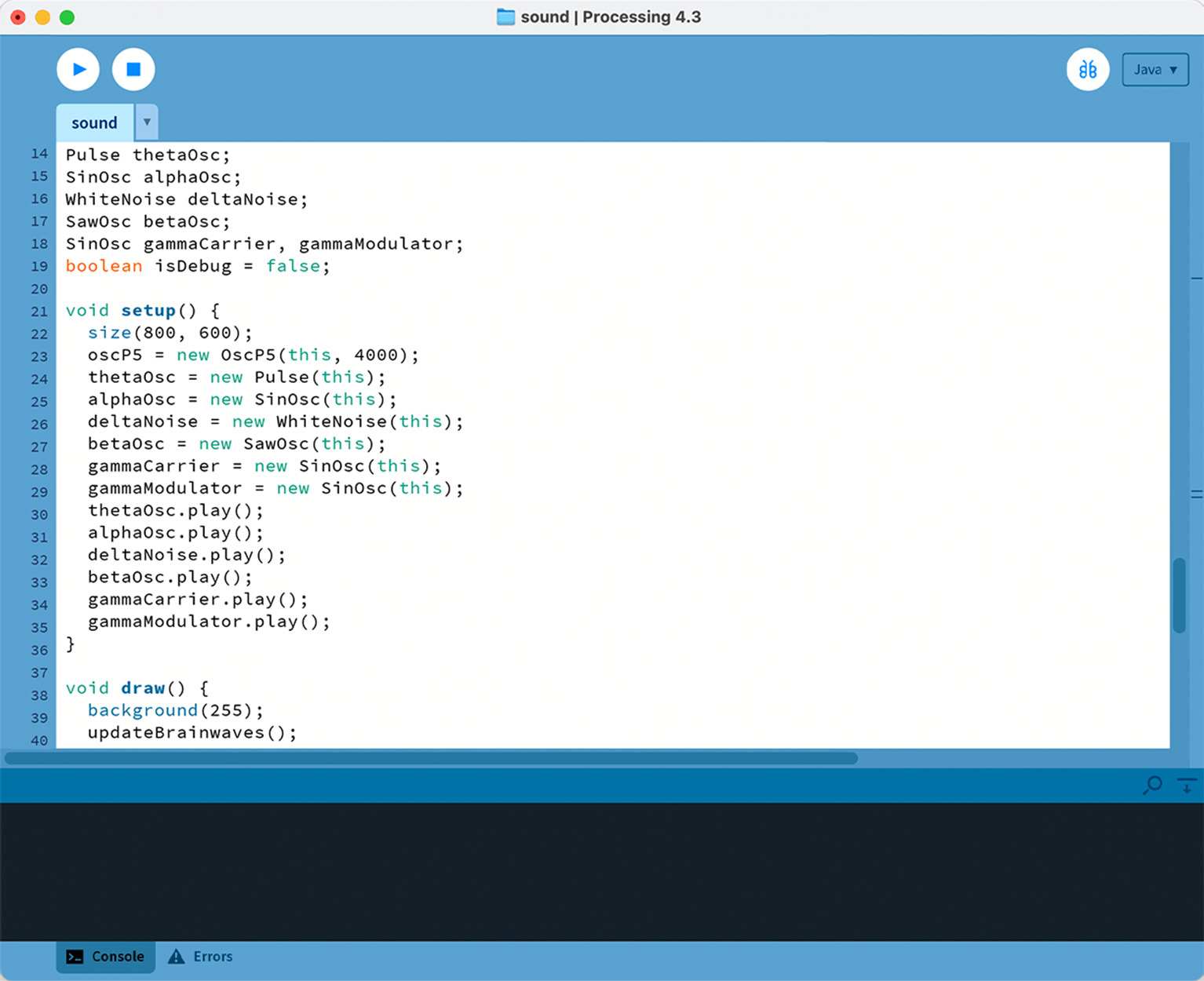

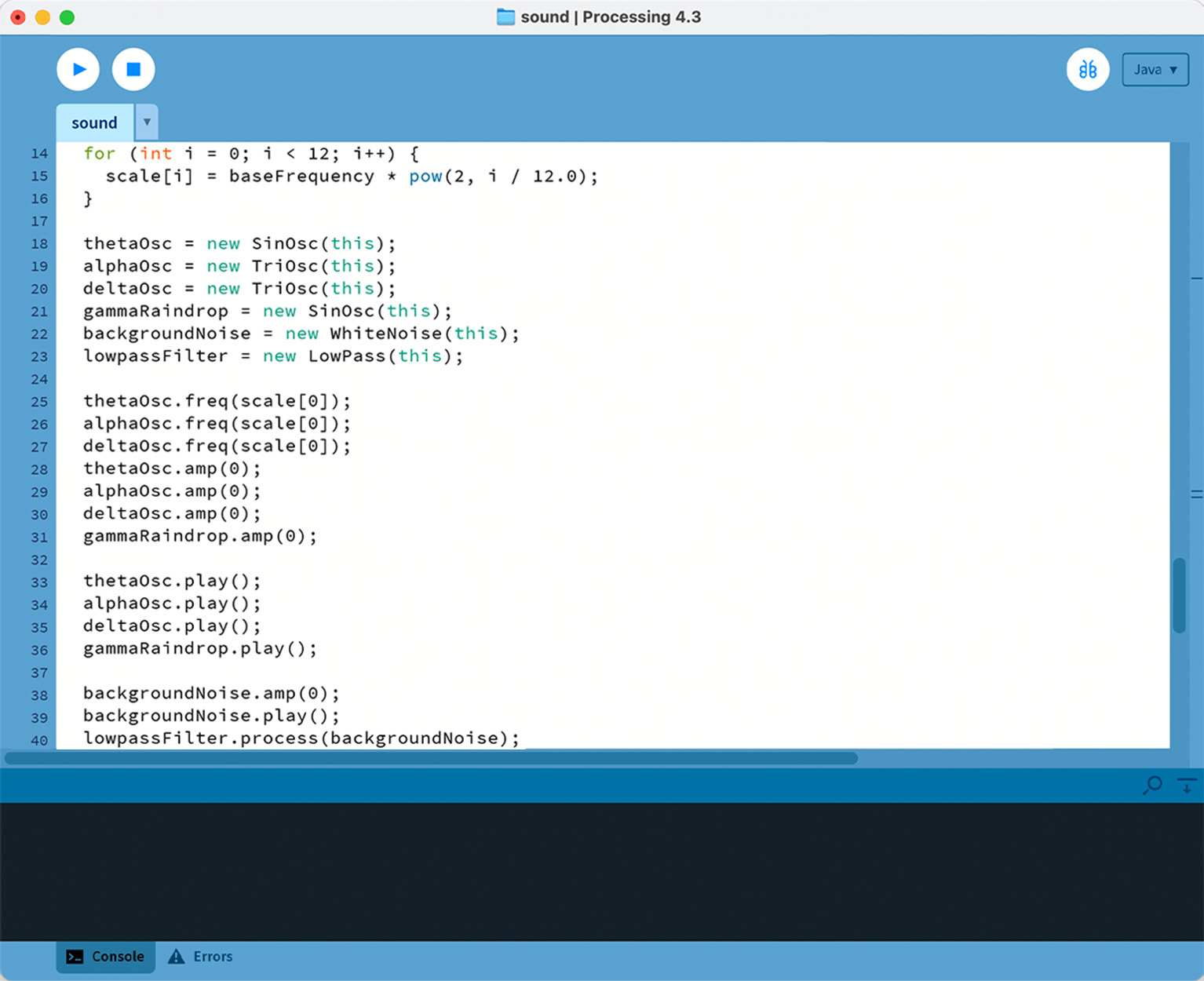

As in previous experiments, I began by setting up a real-time link between the brainwave signals from the Muse headset and sound generation in Processing. I used the usual OSC receiver from the oscP5 library to read all five brainwave bands (theta, alpha, delta, beta, and gamma) into arrays.

In the first version, each band was mapped to a specific waveform (simple oscillators like Pulse or SinOsc), with frequency and amplitude tied directly to the band’s average value. For example, the alpha wave sets the frequency of a sine oscillator within a fixed range, while the delta wave controls the volume of white noise.
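The direct mapping described above can be sketched as follows. This is an illustration in Python, not the Processing code from the project. The 0 to 1 input range, the 200 to 800 Hz frequency range, and the 0 to 0.5 volume range are all assumptions chosen for the example.

```python
def average(values):
    """Mean of the buffered readings for one band (0.0 if the buffer is empty)."""
    return sum(values) / len(values) if values else 0.0


def map_range(value, in_lo, in_hi, out_lo, out_hi):
    """Linear remap in the spirit of Processing's map(), clamped to the output range."""
    if in_hi == in_lo:
        return out_lo
    t = (value - in_lo) / (in_hi - in_lo)
    t = max(0.0, min(1.0, t))
    return out_lo + t * (out_hi - out_lo)


# Hypothetical buffered readings, assumed to be normalised to 0..1.
alpha = [0.25, 0.5, 0.75]
delta = [0.5, 0.5]

# Alpha sets the sine frequency within a fixed range (range is an assumption).
sine_freq = map_range(average(alpha), 0.0, 1.0, 200.0, 800.0)

# Delta sets the white-noise volume (range is an assumption).
noise_amp = map_range(average(delta), 0.0, 1.0, 0.0, 0.5)
```

Clamping keeps an unexpected spike in the signal from pushing the oscillator outside the chosen range.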

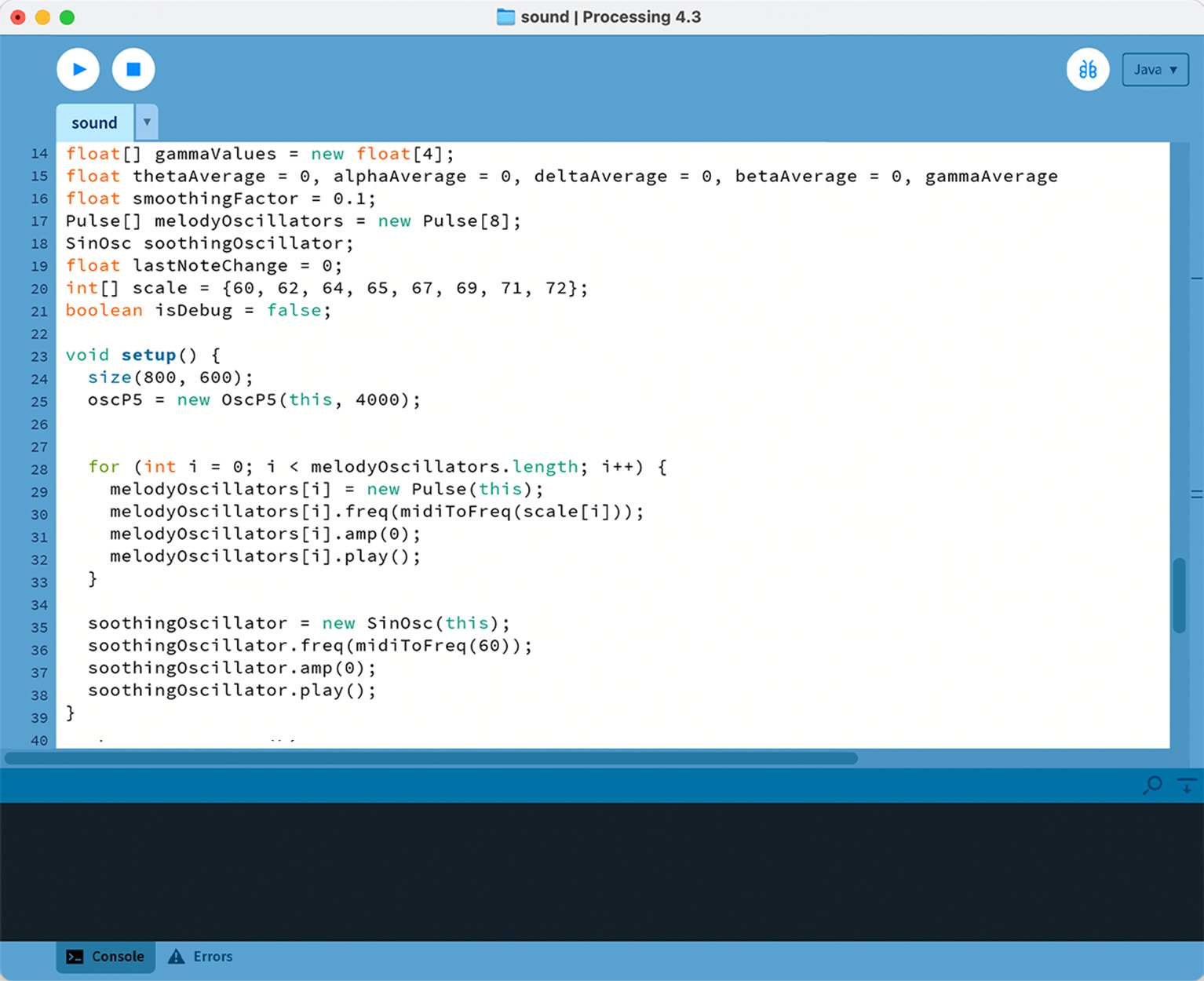

In my second attempt, I tried to introduce a melody by adding a C major scale and associating specific brainwave bands with musical elements. For example, theta was mapped to the speed of note changes, affecting the "rhythm".

Alpha controlled the melody by selecting notes from the scale. I also generated a soothing background sine oscillator that responded to the gamma and beta bands, adding an underlying layer, almost like a bass.
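As a rough illustration of this second mapping, the Python sketch below picks a scale degree from alpha and a note duration from theta. It assumes band values normalised to 0 to 1 and assumes that higher theta means faster note changes; the octave and the millisecond range are also invented for the example.

```python
# One octave of C major as MIDI note numbers, C4 to C5 (octave choice is an assumption).
C_MAJOR_MIDI = [60, 62, 64, 65, 67, 69, 71, 72]


def clamp01(value):
    return max(0.0, min(1.0, value))


def pick_note(alpha, scale=C_MAJOR_MIDI):
    """Alpha (assumed 0..1) selects a note from the scale."""
    index = min(int(clamp01(alpha) * len(scale)), len(scale) - 1)
    return scale[index]


def note_interval_ms(theta, slow_ms=800.0, fast_ms=150.0):
    """Theta (assumed 0..1) sets the time between note changes: higher is faster."""
    return slow_ms + clamp01(theta) * (fast_ms - slow_ms)
```

For example, `pick_note(0.5)` lands on the fifth entry of the scale (G4, MIDI 67), and `note_interval_ms(0.0)` gives the slowest pace of 800 ms.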

The third attempt was similar to the second: each brainwave band assumes a distinct role, and together they control just two separate "tunes". For the first tune, theta controls rhythm, alpha continues to drive melody, and delta is assigned to harmony.

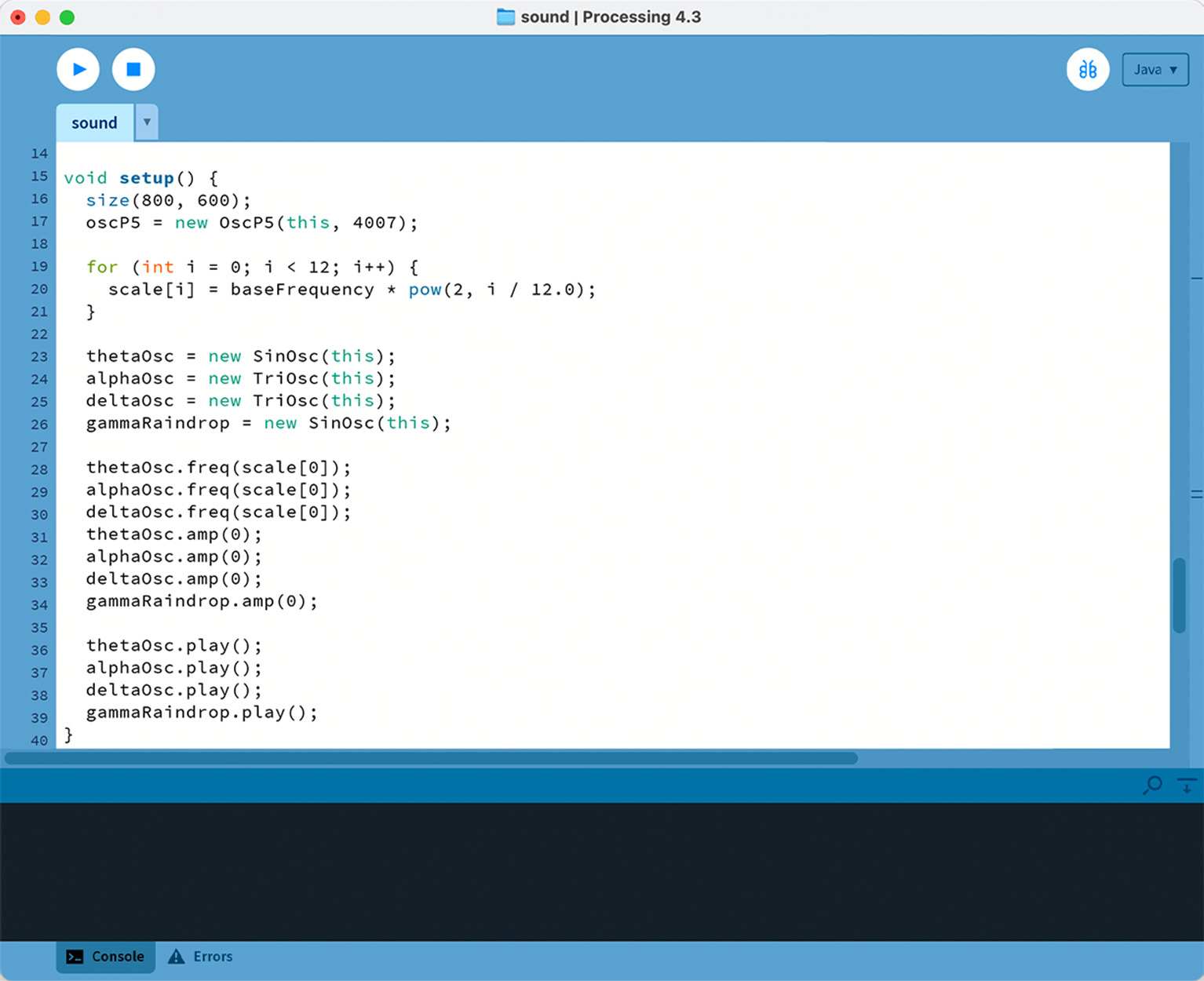

The change this time was that I replaced the C major scale with the 12 chromatic tones, to match the 12-tone serialism narrative. As in the second attempt, I also generated a soothing background sine oscillator that responded to the gamma and beta bands.
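Swapping the scale for all 12 tones mostly means changing the note table. The Python sketch below builds one chromatic octave using the standard equal-temperament formula (A4 = 440 Hz, MIDI note 69). The starting octave and the 0 to 1 alpha range are assumptions for illustration.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def midi_to_hz(midi_note):
    """Equal temperament: each semitone multiplies the frequency by 2^(1/12)."""
    return 440.0 * 2 ** ((midi_note - 69) / 12)


# One chromatic octave starting at C4 (MIDI 60); the octave is an assumption.
CHROMATIC_HZ = [midi_to_hz(60 + i) for i in range(12)]


def pick_chromatic(alpha):
    """Alpha (assumed 0..1) selects one of the 12 tones; returns (name, frequency)."""
    a = max(0.0, min(1.0, alpha))
    index = min(int(a * 12), 11)
    return NOTE_NAMES[index], CHROMATIC_HZ[index]
```

Because all 12 tones are equally likely targets, the melody loses the pull toward a "home" note that the C major version still had.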

In the fourth attempt, I assigned a timbre to each brainwave band. For instance, theta was matched with a flute-like sound using SinOsc, while alpha generated a bell-like sound using TriOsc.

Delta created a softer harmony through a slightly detuned TriOsc, and the gamma band was mapped to a "raindrop" sound effect, triggered sporadically based on the intensity of the beta wave.

As a finishing touch, I changed the delta waves to control the harmony layer by modulating a low-pass filter applied to white noise. I like the noisy, mechanical sound this creates; it adds variety that suits the overall atmosphere.

Also, I changed the "raindrop" effect into more of a beeping sound with changing tones. The beta and gamma waves control these intermittent beeps, with beta managing the timing and gamma altering the pitch.
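To show what modulating a low-pass filter over white noise does to the signal, here is a small Python sketch using a basic one-pole filter. It is not the filter from the Processing sketch, only a stand-in for the idea. The cutoff range of 200 to 4000 Hz and the 0 to 1 delta range are assumptions.

```python
import math
import random


def one_pole_lowpass(samples, cutoff_hz, sample_rate=44100):
    """Simple one-pole low-pass: a lower cutoff smooths the noise more."""
    coeff = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    y = 0.0
    out = []
    for x in samples:
        y += coeff * (x - y)  # move part of the way toward the new sample
        out.append(y)
    return out


def delta_to_cutoff(delta, lo_hz=200.0, hi_hz=4000.0):
    """Delta (assumed 0..1) sets the filter cutoff; the range is an assumption."""
    d = max(0.0, min(1.0, delta))
    return lo_hz + d * (hi_hz - lo_hz)


random.seed(0)  # fixed seed so the example is repeatable
noise = [random.uniform(-1.0, 1.0) for _ in range(4096)]

dark = one_pole_lowpass(noise, delta_to_cutoff(0.0))    # low delta: muffled rumble
bright = one_pole_lowpass(noise, delta_to_cutoff(1.0))  # high delta: hissier texture
```

As delta rises, more of the high-frequency hiss passes through, so the layer shifts between a dull rumble and a brighter, more mechanical noise.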

Visualisation

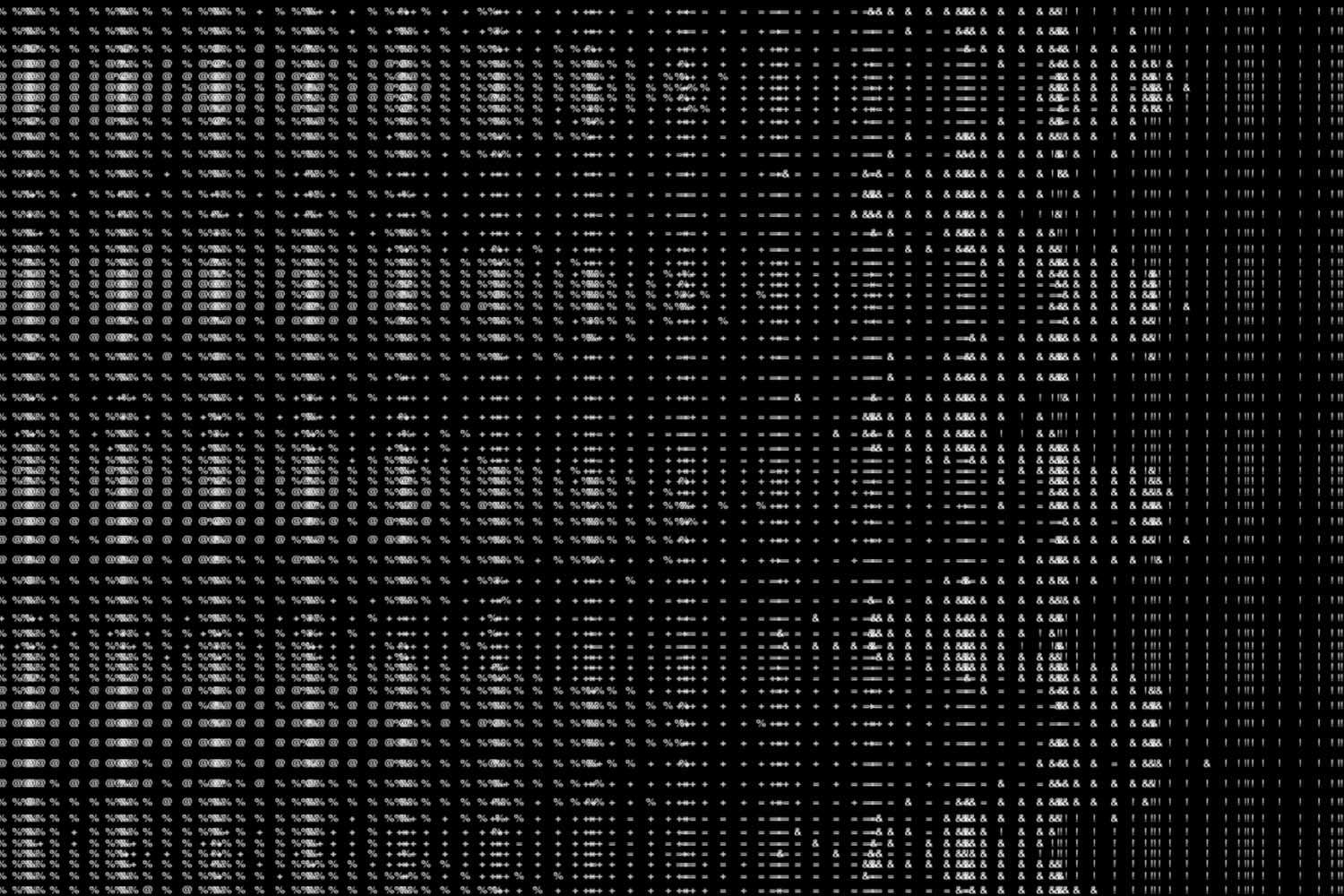

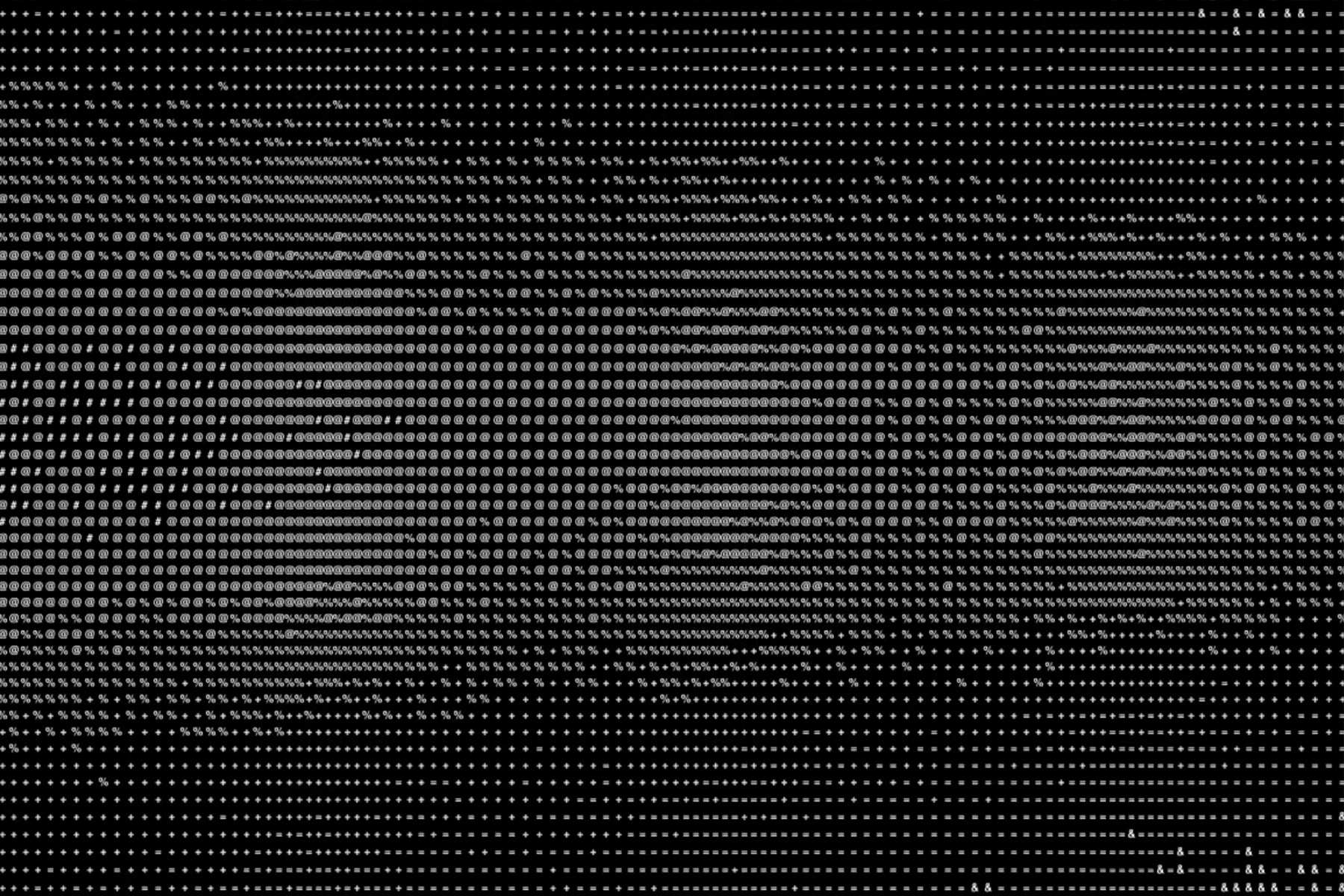

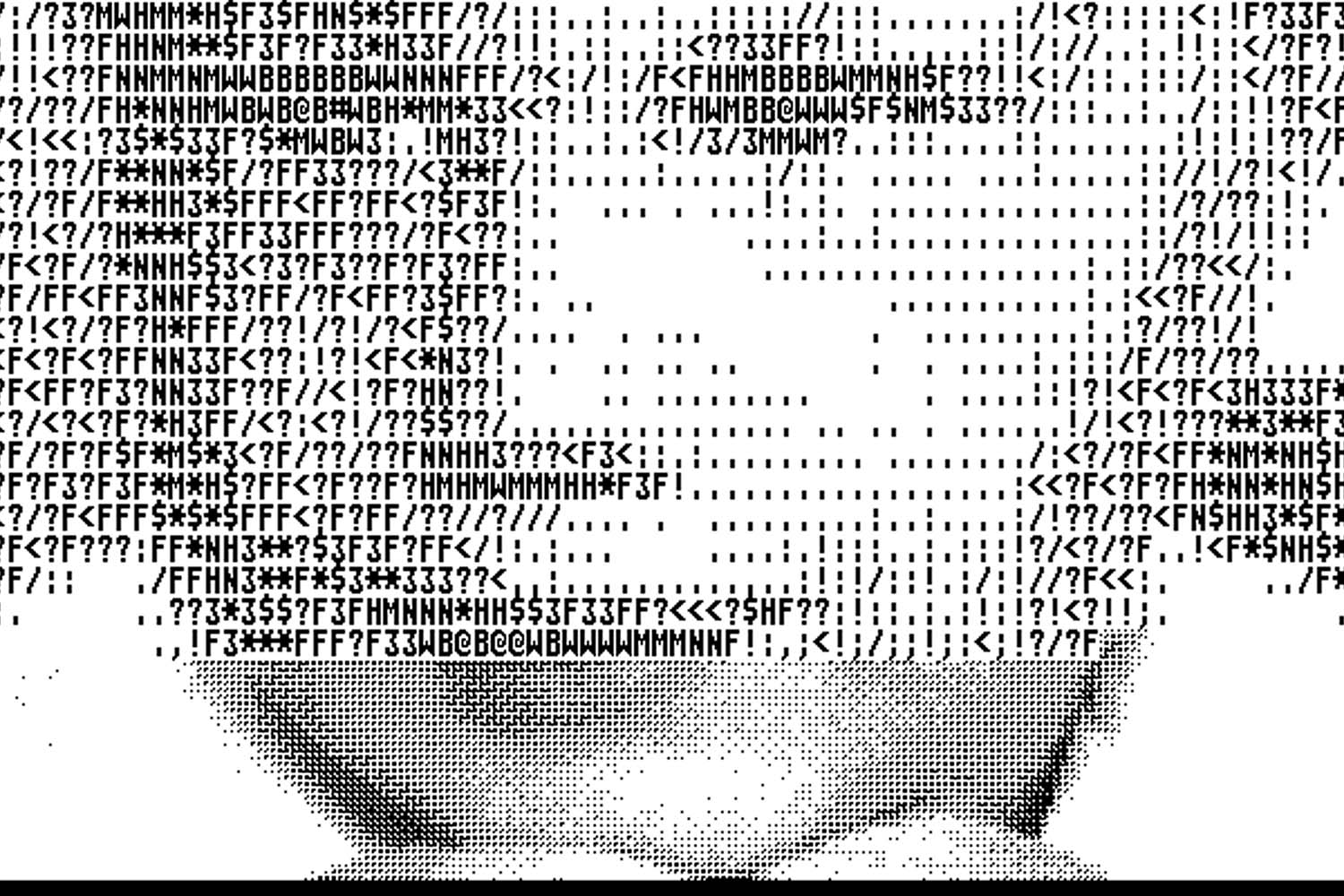

Now, moving on to the visualisation. There wasn’t a defined ideation process for the visuals. The first imagery that came to mind while listening to the sound was something binary, minimalist, and geometric. I figured that the best way to approach those keywords would be to look to ASCII animations for structural inspiration.

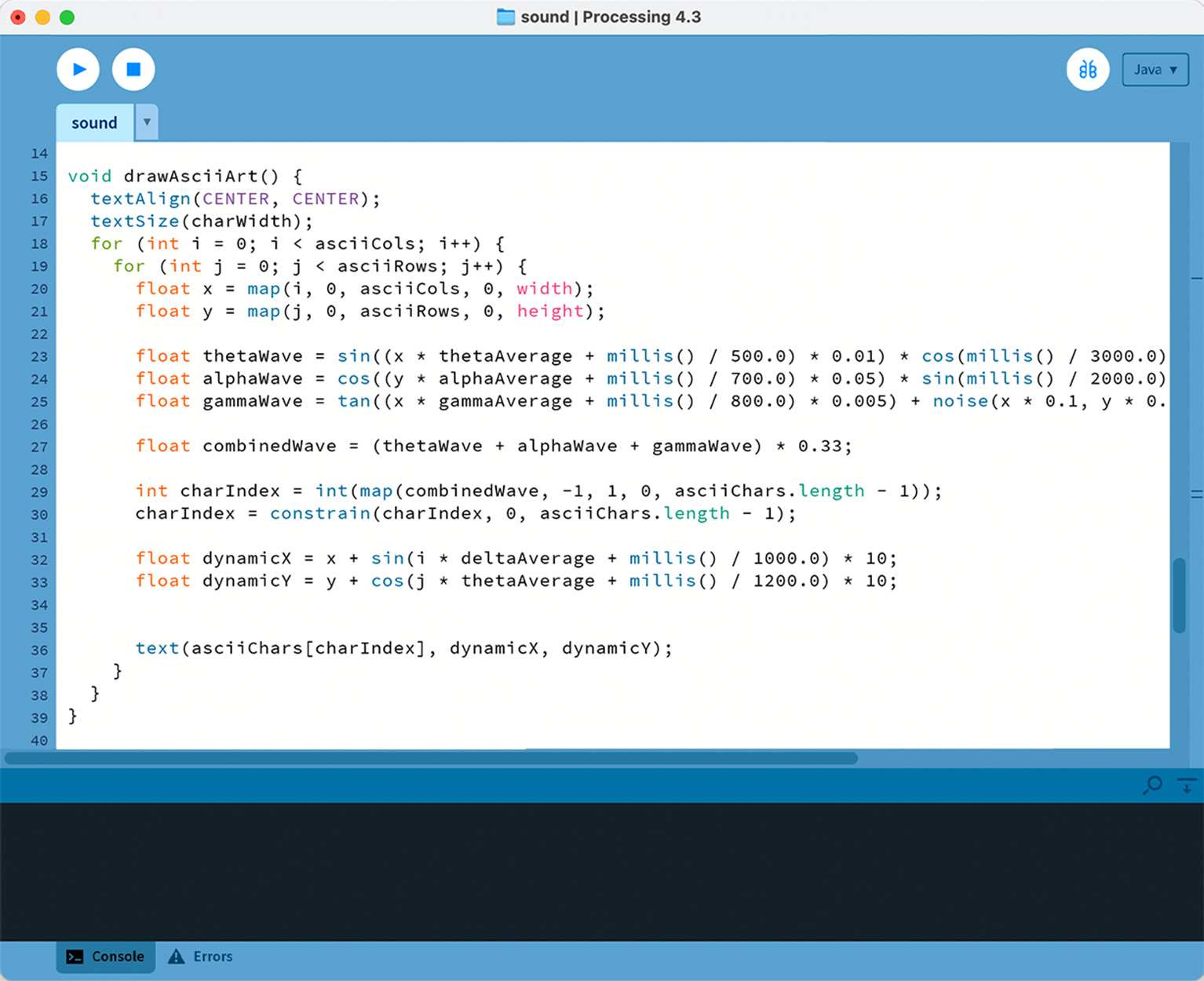

The grid spreads across 120 columns and 60 rows, becoming a canvas where each cell carries a single character. The selection of each character isn’t random: theta, alpha, and gamma values are fed through sine, cosine, and tangent calculations, which together create moving patterns. These layered waves create rippling shifts across the grid, which I think visually reflect the fluidity of EEG data.
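One way such a grid could choose its characters is sketched below in Python. The character set, the spatial scaling constants, and the way the three waves are summed are all assumptions made for illustration; only the 120 by 60 grid and the use of sine, cosine, and tangent come from the description above.

```python
import math

COLS, ROWS = 120, 60
CHARSET = " .:-=+*#%@"  # hypothetical ramp from "empty" to "dense"


def cell_char(col, row, t, theta, alpha, gamma):
    """Pick the character for one cell at time t from three layered waves."""
    tangent = math.tan((col + row) * 0.05 + t * gamma)
    wave = (
        math.sin(col * 0.15 + t * theta)
        + math.cos(row * 0.20 + t * alpha)
        + max(-1.0, min(1.0, tangent))  # clamp tan so its spikes do not dominate
    )
    level = (wave + 3.0) / 6.0  # the sum lies in [-3, 3]; normalise to 0..1
    index = min(int(level * len(CHARSET)), len(CHARSET) - 1)
    return CHARSET[index]


def render_frame(t, theta, alpha, gamma):
    """Return the whole grid as a list of ROWS strings, each COLS characters wide."""
    return [
        "".join(cell_char(c, r, t, theta, alpha, gamma) for c in range(COLS))
        for r in range(ROWS)
    ]
```

Calling `render_frame` with an increasing `t` on every frame makes the pattern drift, and the band values change how quickly each layer moves.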

Another source of movement is subtle shifts in character positions. The theta and delta values nudge the characters slightly along their x and y axes, which looks like a change in kerning and line height! This motion adds depth to the grid, bringing an almost tactile quality just from changes in the modular display.
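The kerning-like effect can be approximated by letting the band values stretch the cell spacing, as in the Python sketch below. The base cell size, the maximum shift, and the choice of theta for the x axis and delta for the y axis are assumptions; the original sketch may apply the offsets differently.

```python
def cell_position(col, row, theta, delta, cell_w=10.0, cell_h=16.0, max_shift=3.0):
    """Return the (x, y) pixel position of a cell.

    theta widens the horizontal spacing (reads like kerning) and delta widens
    the vertical spacing (reads like line height). Both are assumed to be 0..1.
    """
    t = max(0.0, min(1.0, theta))
    d = max(0.0, min(1.0, delta))
    x = col * (cell_w + t * max_shift)
    y = row * (cell_h + d * max_shift)
    return x, y
```

With these defaults, `cell_position(10, 5, 0.0, 0.0)` gives `(100.0, 80.0)` and `cell_position(10, 5, 1.0, 1.0)` gives `(130.0, 95.0)`, so cells further from the origin travel further and the whole grid appears to breathe.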

Outcome